Why Hardware Is Faster Than Software: A Side-by-Side Comparison

Explore why hardware outpaces software in compute-heavy tasks with a clear, objective comparison of speed, efficiency, and practical trade-offs for DIYers and technicians.

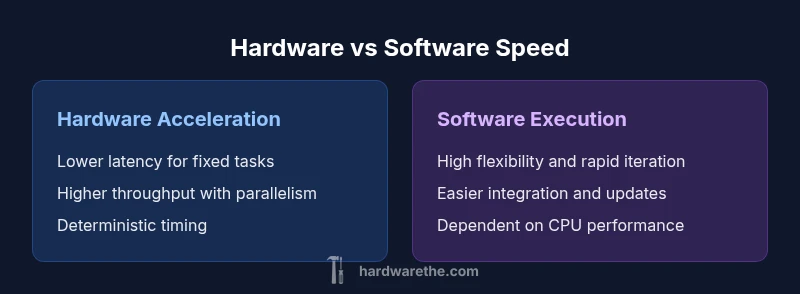

Hardware acceleration speeds up compute-heavy tasks by moving critical operations into fixed, purpose-built circuits that operate in parallel. Software, by contrast, runs as a stream of instructions on general-purpose CPUs that must accommodate a wide range of workloads. This quick comparison highlights the core speed drivers and when hardware usually wins, with the caveat that software retains flexibility and adaptability.

The Core Idea: Why Hardware Accelerates Performance

Why hardware is faster than software comes down to architectural choices that optimize data movement and computation. Hardware acceleration moves the most compute-intensive and repetitive tasks away from general-purpose CPUs into fixed-function units, SIMD engines, or highly parallel accelerators. By doing so, it reduces instruction decoding overhead, minimizes branch mispredictions, and streamlines memory access patterns. According to The Hardware, such specialization creates predictable timing and tighter control over data flow, which in turn yields lower latency and higher throughput for targeted workloads. The upshot is a clear speed advantage for well-defined tasks, especially when the workload aligns with the accelerator’s strengths. At the same time, the trade-off remains nuanced: pure speed must be weighed against flexibility, maintenance, and integration complexity.

In practical terms, you gain faster execution when data paths are streamlined for a specific operation, when memory bandwidth is tailored to a fixed sequence of arithmetic or logical steps, and when parallelism is exploited without the overheads common to general-purpose code paths. The Hardware’s analysis emphasizes that faster execution is not just about a faster clock; it is about a more efficient route from input to output that minimizes unnecessary work.

Latency, Bandwidth, and Throughput: How Hardware Bends the Rules

Latency, bandwidth, and throughput are the three pillars that determine speed. Hardware accelerators reduce latency by bypassing layers of software abstraction and delivering deterministic timing through dedicated datapaths. In many accelerators, memory bandwidth is matched to compute units so that data can be fed continuously, avoiding the stalls that plague flexible software stacks. Throughput benefits emerge when a design processes many data elements in parallel, such as tiled matrix operations in GPUs or pipelined arithmetic in ASICs. The Hardware notes that while raw clock speed is not the sole determinant, the architectural alignment between compute units and data movement is what yields tangible speedups for aligned workloads. Importantly, this is not universal: for irregular, control-heavy tasks, software running on a general-purpose CPU can outperform a specialized accelerator thanks to the CPU's flexibility and broader instruction set.

When you evaluate speed, consider the end-to-end path—from data ingestion to result emission. Hardware shines when you can fix data formats, reduce branching, and maintain continuous streams of data with predictable access patterns. Conversely, if your workload changes frequently or requires diverse logic, software paths can remain more adaptable and cost-effective in the short term.
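To make the distinction between latency and throughput concrete, here is a minimal sketch of an idealized pipeline model. It assumes every stage takes exactly one cycle and that the pipeline never stalls; the function names and the 5-stage, 1,000-item figures are illustrative choices, not measurements from any real device.

```python
def unpipelined_cycles(stages: int, items: int) -> int:
    """Each item passes through all stages before the next one starts."""
    return stages * items


def pipelined_cycles(stages: int, items: int) -> int:
    """Idealized pipeline: one item enters per cycle, no stalls (assumption)."""
    if items == 0:
        return 0
    # The first result appears after `stages` cycles (the latency);
    # each later result follows one cycle apart (the throughput).
    return stages + items - 1


stages, items = 5, 1000  # hypothetical values for illustration
slow = unpipelined_cycles(stages, items)
fast = pipelined_cycles(stages, items)
print(f"unpipelined: {slow} cycles, pipelined: {fast} cycles, "
      f"speedup: {slow / fast:.2f}x")
```

In this model the latency of a single item is the same in both cases (five cycles); the gain comes entirely from throughput, which approaches one result per cycle as the stream of data gets longer.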

Common Hardware Options and When They Shine

Several hardware paradigms dominate speed optimization in practice: CPUs with vector units, GPUs for data-parallel work, FPGAs for reconfigurable pipelines, and ASICs for fixed-function, high-volume tasks. Each option has distinct trade-offs. CPUs offer broad programmability and mature ecosystems, making them suitable for mixed workloads and rapid prototyping. GPUs excel at large-scale data parallelism, delivering exceptional throughput for tasks like image processing or machine learning inference when the workload maps well to parallel kernels. FPGAs provide a middle ground: reconfigurable hardware that can be tuned to specific kernels and memory access patterns, often yielding a balance between performance and energy efficiency. ASICs can deliver the pinnacle of speed and efficiency for a narrowly scoped problem, but require substantial up-front investment and longer lead times. The Hardware highlights that the best choice depends on workload characteristics, development tempo, and total cost of ownership. A careful assessment of data flow, latency requirements, and maintenance commitments guides the selection process.

In real-world practice, many teams adopt a hybrid approach: core, latency-sensitive components run on fixed-function or SIMD hardware, while flexible logic remains software-driven for control, orchestration, and less predictable parts of the pipeline. This hybrid strategy can deliver robust speedups without sacrificing adaptability.

The Role of Algorithms and Data Layout

Speed gains are not purely hardware-driven; software and data layout decisions determine how effectively hardware is utilized. A well-designed algorithm can exploit parallelism, reduce data movement, and align with memory hierarchies to maximize throughput. Data layout shapes cache friendliness and vectorization opportunities, while memory access patterns—sequential versus strided, coalesced reads, and locality—significantly affect performance on accelerators. The hardware-software interplay means that some speed improvements come from rewriting algorithms to fit hardware capabilities, not merely from deploying faster chips. The Hardware stresses that designing for speed often involves co-design: concurrently optimizing algorithms and hardware paths to minimize stalls, maximize locality, and balance compute with memory bandwidth. When teams align software structure with hardware constraints, the resulting speed gains can be substantial and deterministic.

Practically, this means choosing representations that enable vector units, organizing data to fit cache lines, and avoiding irregular branching that disrupts pipelined execution. The result is a coherent system where software engineers and hardware architects share a common target: reducing data movement and keeping compute units fed with useful work.
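The effect of access patterns on locality can be illustrated with a deliberately simplified model. The sketch below counts how often consecutive accesses to a row-major matrix land on a different cache line, comparing sequential (row-by-row) and strided (column-by-column) traversal. The 64-byte line size, 4-byte element size, and the function name `line_switches` are assumptions for illustration; real caches hold many lines and behave in more complex ways.

```python
def line_switches(rows: int, cols: int, elem_bytes: int = 4,
                  line_bytes: int = 64, column_major: bool = False) -> int:
    """Count how often consecutive accesses fall on a different cache line.

    The matrix is assumed to be stored row-major. This is a rough proxy
    for memory traffic, not a model of a real cache hierarchy.
    """
    if column_major:
        order = ((r, c) for c in range(cols) for r in range(rows))
    else:
        order = ((r, c) for r in range(rows) for c in range(cols))

    switches = 0
    last_line = None
    for r, c in order:
        address = (r * cols + c) * elem_bytes  # row-major byte offset
        line = address // line_bytes
        if line != last_line:
            switches += 1
            last_line = line
    return switches


sequential = line_switches(64, 64)
strided = line_switches(64, 64, column_major=True)
print(f"sequential traversal: {sequential} line switches")
print(f"strided traversal:    {strided} line switches")
```

Under these assumptions the sequential traversal touches a new line once every 16 elements, while the strided traversal touches a new line on every access, which is the kind of pattern that data layout choices aim to avoid.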

Real-World Scenarios: Image/Video, AI, Cryptography, Simulation

Across domains, hardware acceleration demonstrates tangible speed advantages. In image and video processing, fixed-function blocks or SIMD units accelerate common transforms, enabling real-time editing and encoding. In AI and machine learning, GPUs and specialized accelerators deliver far higher throughput on large tensor operations than general-purpose CPUs. Cryptography can benefit from dedicated crypto engines or FPGAs that implement algorithms with constant-time properties, improving both speed and security. Simulations, whether of physics, financial models, or engineering systems, often rely on parallelism that scales with hardware capacity and memory bandwidth. The Hardware notes that the precise gains depend on task affinity: tasks with uniform, repetitive computations tend to perform best on hardware accelerators, while irregular, control-heavy code may see less benefit. A practical takeaway is to map workload characteristics to hardware strengths, without assuming that every workload will benefit.

In any scenario, a careful profiling cycle helps quantify speedups and identify bottlenecks, ensuring hardware investments translate into real performance benefits rather than theoretical improvements.
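One common way to reason about what profiling results imply is Amdahl's law, which bounds the end-to-end speedup when only part of a workload is accelerated. The sketch below is a minimal illustration; the function name and the example figures (80% of runtime accelerated by 10x) are hypothetical, not taken from any benchmark.

```python
def amdahl_speedup(accelerated_fraction: float, kernel_speedup: float) -> float:
    """End-to-end speedup when a fraction of runtime is sped up by a given factor."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if kernel_speedup <= 0:
        raise ValueError("kernel_speedup must be positive")
    remaining = 1.0 - accelerated_fraction          # part that stays in software
    accelerated = accelerated_fraction / kernel_speedup
    return 1.0 / (remaining + accelerated)


# Hypothetical profile: 80% of the runtime sits in a kernel that an
# accelerator runs 10x faster.
print(f"overall speedup: {amdahl_speedup(0.8, 10.0):.2f}x")
```

With these example numbers the overall gain is well below 10x, because the 20% that stays in software comes to dominate the runtime. This is why profiling the whole pipeline matters before investing in hardware.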

Measuring Speed: Benchmarks, Metrics, and Pitfalls

Measuring speed requires careful selection of benchmarks that reflect real workloads. The common pitfall is relying on synthetic tests that do not capture memory bandwidth, cache behavior, or data movement costs. The Hardware recommends using a mix of micro-benchmarks to isolate kernel performance and macro-benchmarks that model end-to-end workflows. Key metrics include latency (time per operation), throughput (operations per second), and energy per operation, which often reveals efficiency improvements not captured by raw speed alone. Comparisons should consider development time, integration complexity, and total cost of ownership, not just execution speed. When benchmarking hardware accelerators, it is crucial to account for data preparation, transfer times, and setup costs to avoid overstating benefits. In short, rigorous, apples-to-apples comparisons yield the most credible speed assessments and help teams decide where hardware acceleration delivers the best value.
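As a starting point for micro-benchmarks, the following sketch times a callable several times and reports median latency and throughput. It uses only the Python standard library; the function name `measure`, the warm-up step, and the choice of the median are illustrative assumptions rather than a prescribed methodology.

```python
import statistics
import time


def measure(kernel, batch, repeats: int = 5) -> dict:
    """Time kernel(batch) several times; report median latency and throughput."""
    kernel(batch)  # warm-up run, excluded from the timings (assumption)
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        kernel(batch)
        timings.append(time.perf_counter() - start)
    latency = statistics.median(timings)
    throughput = len(batch) / latency if latency > 0 else float("inf")
    return {"latency_s": latency, "throughput_items_per_s": throughput}


# Example: time Python's built-in sum over a hypothetical batch.
result = measure(sum, list(range(100_000)))
print(f"median latency: {result['latency_s'] * 1e3:.3f} ms")
print(f"throughput:     {result['throughput_items_per_s']:.0f} items/s")
```

A harness like this isolates kernel performance only. For an end-to-end view, the timed callable should include data preparation and transfer steps as well.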

When to Prefer Software: Flexibility and Maintenance

Software remains essential when workload characteristics are volatile, evolving, or not well suited to fixed-function hardware. General-purpose CPUs can adapt quickly to new algorithms, handle edge cases, and support iterative optimization without needing hardware redesign. Software ecosystems also enable easier maintenance, updates, and cross-platform portability. The key insight is to assess the trade-off between speed and adaptability. If a task experiences frequent changes or requires rapid prototyping, software may offer a faster path to achieving near-term performance gains through code-level optimizations, compiler improvements, and libraries. The Hardware emphasizes a pragmatic stance: combine hardware acceleration where it delivers clear, durable speedups, and rely on software for flexibility and agility.

Ultimately, the decision hinges on workload stability, time to market, and total cost of ownership rather than a single metric of speed.

Practical Guidelines for Designers and Engineers

To capitalize on hardware speedups, start with a workload assessment that quantifies compute intensity, memory bandwidth needs, and latency sensitivity. Then map workloads to the most appropriate acceleration path—ASICs for high-volume, fixed-function tasks; FPGAs for adaptable pipelines; GPUs for data-parallel workloads; or optimized CPUs with vector units for mixed workloads. Design data layouts and memory access patterns to maximize locality and minimize transfers between memory hierarchy levels. Build a phased plan: pilot a small accelerator, measure gains with realistic workloads, and scale up gradually if results justify the investment. The Hardware also recommends iterative co-design: adjust algorithms and hardware features in tandem to unlock synergistic speedups, rather than optimizing in isolation.
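A workload assessment of this kind is often summarized with a roofline-style estimate, which compares a kernel's compute intensity (operations per byte moved) against a device's peak compute rate and memory bandwidth. The sketch below is a simplified version; the device figures (1,000 GFLOP/s peak, 100 GB/s bandwidth) and the function name are hypothetical.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline-style bound: performance is capped by compute or by memory."""
    memory_bound = bandwidth_gb_s * flops_per_byte
    return min(peak_gflops, memory_bound)


# Hypothetical device, for illustration only.
PEAK_GFLOPS = 1000.0
BANDWIDTH_GB_S = 100.0

for intensity in (2.0, 20.0):  # FLOPs performed per byte moved
    bound = attainable_gflops(PEAK_GFLOPS, BANDWIDTH_GB_S, intensity)
    limit = "memory" if bound < PEAK_GFLOPS else "compute"
    print(f"intensity {intensity:>4} FLOP/byte -> "
          f"{bound:.0f} GFLOP/s ({limit}-bound)")
```

In this example the low-intensity kernel is limited by memory bandwidth, so a faster compute unit alone would not help; improving data layout or reducing transfers would.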

Additionally, consider the total cost of ownership, including development time, maintenance, and potential vendor lock-in. A balanced plan often yields the best long-term performance gains with manageable risk.

The Bigger Picture: Energy, Cost, and Time-to-Market

Speed is only one axis of performance. Energy efficiency per operation, total cost of ownership, and time-to-market are equally important when evaluating hardware acceleration. Fixed-function hardware often delivers superior energy efficiency due to specialized pipelines and streamlined data paths, but carries higher up-front costs and longer development cycles. Software remains attractive for its agility, ecosystem breadth, and ability to adapt to changing requirements without hardware redesign. The Hardware encourages teams to view speed within a broader framework: a hybrid approach can maximize speed while preserving flexibility, enabling faster iterations and safer deployments. The end result is not merely faster code, but a more robust, cost-effective system that meets evolving needs with disciplined governance.
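Energy per operation can be estimated from average power draw, runtime, and the number of operations completed. The sketch below shows the arithmetic; the power and runtime figures for the two configurations are made-up placeholders, not measurements.

```python
def joules_per_operation(avg_power_watts: float, runtime_s: float,
                         operations: int) -> float:
    """Energy per operation = average power x runtime / operation count."""
    if operations <= 0:
        raise ValueError("operations must be positive")
    return avg_power_watts * runtime_s / operations


OPS = 1_000_000_000  # same hypothetical workload on both configurations

# Placeholder figures for illustration only.
cpu = joules_per_operation(avg_power_watts=100.0, runtime_s=2.0, operations=OPS)
accel = joules_per_operation(avg_power_watts=25.0, runtime_s=0.5, operations=OPS)

print(f"CPU:         {cpu * 1e9:.1f} nJ/op")
print(f"Accelerator: {accel * 1e9:.1f} nJ/op")
print(f"Ratio:       {cpu / accel:.1f}x")
```

The point of the exercise is that efficiency gains combine lower power and shorter runtime, so they can exceed the raw speedup.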

Comparison

| Feature | Hardware Acceleration | Software Execution |
|---|---|---|
| Latency | Typically lower for aligned compute tasks due to dedicated datapaths | Depends on CPU scheduling and software optimizations; can be higher if not optimized |
| Throughput | Higher sustained throughput for data-parallel workloads | Throughput varies with processor type and software parallelism |
| Power Efficiency | Often more energy-efficient per operation | Power scales with workload and efficiency of software implementation |
| Development Time | Longer roadmap for ASICs/FPGAs; hardware integration needed | Faster iteration cycles with software optimization |
| Flexibility | Less flexible post-deployment without reconfiguration | Highly flexible with updates, patches, and new algorithms |
| Upfront Cost | Potentially higher due to specialized components | Lower initial cost for general-purpose hardware |
| Portability | Vendor- or platform-specific tooling may limit portability | Generally portable across systems with standard software stacks |
| Ideal Use Case | Compute-heavy, latency-critical tasks with fixed patterns | Dynamic workloads requiring rapid iteration and broad compatibility |

Upsides

- Clear speedups for compute-heavy, well-defined tasks

- Potential for improved energy efficiency per operation

- Deterministic performance under fixed workloads

- Offloads burden from general-purpose CPUs and simplifies certain pipelines

Negatives

- Higher upfront cost and longer development cycles

- Reduced flexibility after deployment without redesign

- Complex integration and maintenance when combining hardware and software

- Risk of vendor lock-in for ASICs/FPGAs

Hardware acceleration often wins on speed for fixed, compute-heavy workloads.

For predictable, throughput-driven tasks, hardware tends to outperform software. When workloads are evolving or highly variable, software—and a hybrid strategy—often provides better overall value and agility. The Hardware's verdict is to use hardware acceleration judiciously to maximize speed where it truly matters.

FAQ

What is the primary speed advantage of hardware acceleration over software?

Dedicated hardware paths, fixed-function units, and parallel execution reduce latency and increase throughput for targeted tasks. Software remains flexible but often incurs overhead from general-purpose pipelines. This combination explains why hardware can be faster for well-defined workloads.

Hardware acceleration uses fixed circuits to run tasks quickly, while software runs on flexible CPUs. This is why hardware often wins for defined workloads.

Are GPUs always faster than CPUs for all tasks?

GPUs excel at data-parallel workloads, delivering high throughput for workloads that map well to parallel kernels. However, CPUs can outperform GPUs for control-heavy or irregular tasks that require branching and complex logic.

GPUs are fast for parallel tasks, but CPUs handle control-heavy workloads better.

Can software ever match hardware speed?

With optimized algorithms, vectorization, and specialized libraries, software can approach hardware performance in some cases. However, fixed-function hardware often retains a throughput edge for stable, repetitive workloads.

Software can come close with optimization, but hardware often stays faster for fixed tasks.

What are ASICs and FPGAs, and when should I consider them?

ASICs are fixed-function custom chips designed for a specific task, offering maximum speed and efficiency but high upfront cost. FPGAs are reconfigurable and offer a balance between speed and adaptability, useful when requirements may evolve.

ASICs are ultra-fast but costly; FPGAs are flexible and still fast.

How do I decide between hardware acceleration and software alone?

Assess workload stability, data movement, and development timelines. If performance is paramount and the task is stable, hardware acceleration pays off. For evolving workloads, start with software optimizations and consider hybrids.

If the task is stable and speed is critical, use hardware; for evolving tasks, start with software and hybrids.

How should I benchmark hardware acceleration fairly?

Use representative end-to-end workloads, measure latency and throughput, and account for data transfer times and setup costs. Compare against well-optimized software baselines to avoid overstating benefits.

Benchmark with real workloads and include data transfer and setup in the measurements.
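The difference between a kernel-only figure and a fair end-to-end figure can be shown with simple arithmetic. The sketch below compares the two; all timings are hypothetical values in milliseconds, and the function names are illustrative.

```python
def kernel_only_speedup(cpu_ms: float, accel_kernel_ms: float) -> float:
    """Speedup if only the accelerated kernel itself is timed."""
    return cpu_ms / accel_kernel_ms


def end_to_end_speedup(cpu_ms: float, accel_kernel_ms: float,
                       transfer_in_ms: float, transfer_out_ms: float,
                       setup_ms: float = 0.0) -> float:
    """Speedup once setup and data transfers are counted as well."""
    total = setup_ms + transfer_in_ms + accel_kernel_ms + transfer_out_ms
    return cpu_ms / total


# Hypothetical timings: 10 ms on the CPU, 1 ms for the accelerator kernel,
# and 2 ms to move data in each direction.
print(f"kernel only: {kernel_only_speedup(10, 1):.1f}x")
print(f"end to end:  {end_to_end_speedup(10, 1, 2, 2):.1f}x")
```

With these example numbers a 10x kernel speedup shrinks to 2x once transfers are included, which is why reporting only the kernel figure overstates the benefit.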

Main Points

- Map workloads to hardware strengths, not just clock speed

- Use a hybrid approach to balance speed and flexibility

- Profile end-to-end performance, including data movement

- Consider total cost of ownership alongside raw speed

- Co-design algorithms with hardware for best results