Hardware vs Software Encoding: A Practical Comparison

A thorough, analytical guide comparing hardware vs software encoding, examining latency, throughput, flexibility, costs, ecosystems, security, and when each approach shines for DIYers and professionals.

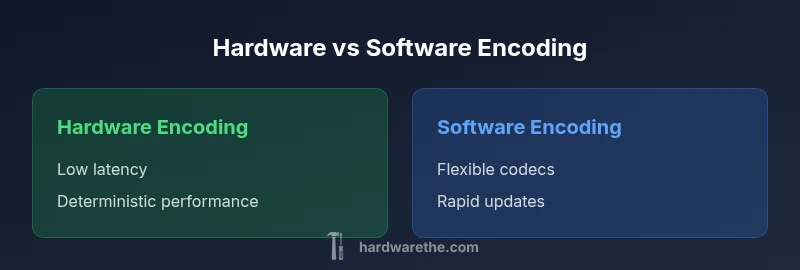

Hardware vs software encoding is a fundamental choice for any streaming, broadcast, or editing workflow. For real-time pipelines with strict latency requirements, hardware encoding often delivers deterministic performance, while software encoding shines when codec flexibility and rapid iteration matter more than ultra-low latency. This guide helps you align your project goals with the right encoding path.

Defining hardware encoding vs software encoding

According to The Hardware, encoding is the process of converting raw media into a compressed stream suitable for storage or transmission. In hardware encoding, the codec runs on dedicated silicon inside a device or accelerator, such as an ASIC, an FPGA, or a PCIe card. In software encoding, the codec runs on general-purpose processors, typically within an application stack or cloud service. The phrase hardware vs software encoding captures this split, and the choice affects latency, throughput, energy use, and upgrade cycles. For DIYers and technicians, understanding it helps match system requirements with budget and reliability constraints.

The Hardware team notes that hardware encoders are optimized for throughput and determinism, while software encoders excel in flexibility and codec agility. This article compares both paths through a practical lens, using typical workloads such as live streaming, post-production, and archival backup. Encoding quality is influenced by settings (bitrate, profile, resolution), which interact with the underlying architecture. Hardware encoders often offer fixed pipelines that minimize jitter, while software stacks can be tuned iteratively to squeeze out extra efficiency. From a reliability standpoint, hardware blocks are largely insulated from operating system variability, though they still depend on drivers and firmware; when a codec becomes obsolete, replacement means new hardware. The Hardware’s perspective on this topic emphasizes lifecycle planning as a core decision factor.
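To make the interaction between settings and architecture concrete, here is a minimal sketch that builds an FFmpeg argument list from the same bitrate, profile, and resolution for either path. `EncodeSettings` and `build_ffmpeg_args` are hypothetical names introduced for illustration. `libx264` and `h264_nvenc` are real FFmpeg encoder names, but whether they are available depends on how FFmpeg was built and, for `h264_nvenc`, on having a supported NVIDIA GPU. The sketch only builds the command; it does not run it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EncodeSettings:
    """Quality-related settings mentioned in the text (hypothetical container)."""
    bitrate_kbps: int
    profile: str  # for H.264, typically "baseline", "main", or "high"
    width: int
    height: int


def build_ffmpeg_args(src: str, dst: str, settings: EncodeSettings,
                      use_hardware: bool) -> list[str]:
    """Return an FFmpeg command line as a list; nothing is executed here."""
    # Assumption: an FFmpeg build that includes the chosen encoder.
    encoder = "h264_nvenc" if use_hardware else "libx264"
    return [
        "ffmpeg", "-i", src,
        "-c:v", encoder,
        "-b:v", f"{settings.bitrate_kbps}k",
        "-profile:v", settings.profile,
        "-vf", f"scale={settings.width}:{settings.height}",
        dst,
    ]


if __name__ == "__main__":
    settings = EncodeSettings(bitrate_kbps=4500, profile="high", width=1280, height=720)
    print(" ".join(build_ffmpeg_args("in.mp4", "out.mp4", settings, use_hardware=False)))
    print(" ".join(build_ffmpeg_args("in.mp4", "out.mp4", settings, use_hardware=True)))
```

Only the encoder name differs between the two commands, which is the point: the settings are shared, but how well each path honors them at a given bitrate is something to measure on your own material.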

When to choose hardware encoding

Hardware encoding is typically favored when latency, determinism, and energy efficiency are critical. Embedded devices, live broadcast, and high-volume streaming workloads benefit from dedicated codecs that run independently of host CPU load. With hardware encoders, jitter is minimized, predictability improves, and sustained throughput remains largely unaffected by other tasks on the system. This makes hardware a solid fit for environments where outages or delays cannot be tolerated. However, hardware paths often come with fixed codec support and a longer refresh cycle, so if your project requires rapid codec evolution or customization, you may feel constrained. The Hardware team’s guidance suggests mapping your required codecs, target latency, and update cadence early to avoid costly retrofits later. When integrating hardware encoders, compatibility with your capture chain, transport protocols, and monitoring tools matters as much as the encoder chip itself.

When to choose software encoding

Software encoding shines where flexibility, experimentation, and rapid codec evolution are paramount. If your project pivots between codecs, custom profiles, or evolving standards (for example, new codecs or licensing models), software stacks let you swap components with less friction. Software can scale with commodity hardware and cloud resources, enabling dynamic resource allocation based on workload. It is also easier to implement software-based pilots and proof-of-concept experiments without committing to new hardware. The trade-off is that software introduces dependence on host performance; latency can spike when CPUs or GPUs are busy, and energy consumption may rise with higher encoding workloads. For teams prioritizing agile development, frequent updates, and a broad codec portfolio, software encoding is a versatile choice. The Hardware team notes that software approaches enable cost-effective testing of new workflows before committing to hardware investments.
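One way to check whether host load is actually hurting a software encoder is to record per-frame encode times and look at their spread rather than just the average. The sketch below is an illustration under assumptions: `latency_report` is a hypothetical helper, the sample values are made up, and the nearest-rank 99th percentile and population standard deviation are one reasonable choice of metrics among several.

```python
import statistics


def latency_report(frame_times_ms: list[float]) -> dict[str, float]:
    """Summarize per-frame encode times given in milliseconds."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two samples")
    ordered = sorted(frame_times_ms)
    n = len(ordered)
    # Nearest-rank 99th percentile: rank = ceil(0.99 * n), using integer math.
    rank = (99 * n + 99) // 100
    return {
        "mean_ms": statistics.fmean(ordered),
        "p99_ms": ordered[rank - 1],
        # Population standard deviation as a simple jitter indicator.
        "jitter_ms": statistics.pstdev(ordered),
        "worst_ms": ordered[-1],
    }


if __name__ == "__main__":
    # Made-up samples: a steady encoder with one spike under load.
    samples = [10.0] * 99 + [50.0]
    for key, value in latency_report(samples).items():
        print(f"{key}: {value:.2f}")
```

A mean that looks healthy alongside a large `worst_ms` is the pattern the paragraph above describes: occasional spikes when the host is busy.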

Performance vs cost: a practical framework

To choose wisely, balance performance targets with total cost of ownership. Key factors include latency tolerance, peak throughput needs, update cadence, and long-term maintenance costs. Hardware encoding minimizes runtime variability, reducing the risk of dropped frames and buffering in live streams. Software encoding offers lower up-front costs and flexibility to adapt to codec changes without replacing hardware. The Hardware analysis shows that the most cost-effective solution often involves a hybrid approach: hardware-accelerated encoding for the critical path, complemented by software workloads for control, experimentation, and non-time-critical tasks. When evaluating options, quantify not just device price but power, cooling, maintenance, and upgrade cycles.
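The cost factors listed above can be combined into a rough total-cost-of-ownership estimate. The following is a simplified sketch, not a costing model: `EncoderOption` and `total_cost` are hypothetical names, every figure in the example is invented, and cooling is approximated as a fractional overhead on energy cost.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class EncoderOption:
    name: str
    upfront_cost: float        # purchase price or initial setup
    power_watts: float         # average draw while encoding
    yearly_maintenance: float  # support, licences, staff time
    refresh_years: float       # how often the option must be bought again


def total_cost(option: EncoderOption, years: float, kwh_price: float,
               hours_per_year: float = 8760.0,
               cooling_overhead: float = 0.0) -> float:
    """Estimate cost of ownership over `years` (all inputs are assumptions)."""
    energy_kwh = option.power_watts / 1000.0 * hours_per_year * years
    energy_cost = energy_kwh * kwh_price * (1.0 + cooling_overhead)
    # At least one purchase, plus one more for each refresh cycle that elapses.
    purchases = max(1, math.ceil(years / option.refresh_years))
    return (option.upfront_cost * purchases
            + energy_cost
            + option.yearly_maintenance * years)


if __name__ == "__main__":
    # Invented numbers purely for illustration.
    appliance = EncoderOption("hardware appliance", 3000.0, 40.0, 100.0, 6.0)
    server = EncoderOption("software on a server", 1200.0, 250.0, 300.0, 4.0)
    for option in (appliance, server):
        cost = total_cost(option, years=6, kwh_price=0.15, cooling_overhead=0.3)
        print(f"{option.name}: {cost:.0f}")
```

Replacing the invented figures with quotes and measured power draw from your own environment is what makes the comparison meaningful.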

Compatibility, ecosystem, and support

Compatibility with your existing infrastructure is essential. Hardware encoders integrate with specific capture cards, transport streams, and monitoring systems; software encoders pair with flexible APIs, operating systems, and cloud platforms. Ecosystem maturity matters: widely adopted, well-documented codecs and robust driver support reduce integration risk. Licensing models also influence total cost, particularly for streaming studios or enterprise deployments. The optimal decision considers how easily your team can manage updates, security patches, and vendor support in both paths. The Hardware team emphasizes assessing your current stack and future roadmap to determine whether a stable, fixed-path encoder or an adaptable software pipeline better aligns with your technical goals.

Security, reliability, and maintenance implications

Security and reliability are shaped by where encoding happens. Hardware encoders isolate the encoding process in dedicated silicon, reducing exposure to host-system software vulnerabilities and some forms of attack. They also tend to require less frequent software maintenance, since much of the logic runs on the chip. Software encoders are more exposed to operating system and library vulnerabilities but benefit from rapid patch cycles, easier auditing, and broader developer communities. Maintenance overhead for software can be higher, given frequent updates, dependency trees, and cloud integration concerns. The Hardware team underscores the importance of keeping firmware up to date for hardware encoders and maintaining transparent update policies for software-based pipelines. In both paths, robust monitoring, threat modeling, and disaster recovery planning are essential. Authority sources include government and standards organizations whose publications bear on encoding and security practice, such as NIST, the FCC, and ISO.

Authority sources

- https://www.nist.gov

- https://www.fcc.gov

- https://www.iso.org

Real-world scenarios and decision guidelines

Consider a live sports broadcast with strict latency budgets: hardware encoding is typically preferred to minimize jitter and ensure smooth, uninterrupted streams. For a post-production house testing new codecs or prototyping a streaming feature, software encoding provides the fastest path to pilot and iterate. In blended workflows, a hybrid setup can deliver reliability on the critical path while allowing experimentation in ancillary streams. When the project prioritizes long-term codec ownership and minimal ongoing licenses, hardware may offer better lifecycle predictability; when the project prioritizes codec agility and lower upfront costs, software wins. The decision framework should map latency requirements, codec strategy, budget constraints, and team capabilities to pick the right combination.
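The decision guidelines above can be expressed as a small rule set. This is a deliberately coarse sketch: `Requirements`, `EncodingPath`, and `recommend` are hypothetical names, the rules are one reading of the guidance in this section, and team capabilities are left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class EncodingPath(Enum):
    HARDWARE = "hardware"
    SOFTWARE = "software"
    HYBRID = "hybrid"


@dataclass(frozen=True)
class Requirements:
    strict_latency: bool       # e.g. live broadcast with a tight latency budget
    needs_codec_agility: bool  # codecs or profiles expected to change often
    low_upfront_budget: bool   # capital spend must stay small


def recommend(req: Requirements) -> EncodingPath:
    """Map requirements to a path using the section's rules of thumb."""
    wants_hardware = req.strict_latency
    wants_software = req.needs_codec_agility or req.low_upfront_budget
    if wants_hardware and wants_software:
        # Hardware on the critical path, software for everything else.
        return EncodingPath.HYBRID
    if wants_hardware:
        return EncodingPath.HARDWARE
    # Assumption: with no strict latency need, software is the default.
    return EncodingPath.SOFTWARE


if __name__ == "__main__":
    live_sports = Requirements(True, False, False)
    post_house = Requirements(False, True, True)
    blended = Requirements(True, True, False)
    for case in (live_sports, post_house, blended):
        print(case, "->", recommend(case).value)
```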

Future trends in encoding technologies and practices

Expect continued hybridity, with more devices featuring mixed pipelines that leverage hardware acceleration for core functions and software for configurability. AI-driven codecs and perceptual optimization may shift some workloads toward software as models run on scalable hardware, while specialized accelerators emerge to handle AI-assisted encoding paths. Edge encoding will grow as bandwidth constraints push processing closer to the source, enabling lower latency and increased privacy. The key is to design modular pipelines that allow swapping codecs, adjusting hardware offloads, and updating software components without disruptive rewrites.
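A modular pipeline of the kind described here can be sketched with a small encoder interface that the rest of the code depends on, so that a hardware-backed or software-backed implementation can be swapped in without rewrites. Everything below is hypothetical: the `Encoder` protocol, the two stub classes, and `Pipeline` are illustrative stand-ins that tag bytes rather than compress anything.

```python
from typing import Protocol


class Encoder(Protocol):
    """Minimal interface the pipeline relies on (hypothetical)."""
    name: str

    def encode(self, frame: bytes) -> bytes: ...


class SoftwareEncoderStub:
    name = "software-stub"

    def encode(self, frame: bytes) -> bytes:
        # Stand-in for a CPU codec call; it only prefixes the frame.
        return b"SW" + frame


class HardwareEncoderStub:
    name = "hardware-stub"

    def encode(self, frame: bytes) -> bytes:
        # Stand-in for a call into an accelerator driver.
        return b"HW" + frame


class Pipeline:
    def __init__(self, encoder: Encoder) -> None:
        self._encoder = encoder

    def swap_encoder(self, encoder: Encoder) -> None:
        """Replace the encoder without touching the rest of the pipeline."""
        self._encoder = encoder

    def process(self, frames: list[bytes]) -> list[bytes]:
        return [self._encoder.encode(frame) for frame in frames]


if __name__ == "__main__":
    pipeline = Pipeline(SoftwareEncoderStub())
    print(pipeline.process([b"frame1"]))
    pipeline.swap_encoder(HardwareEncoderStub())
    print(pipeline.process([b"frame1"]))
```

Keeping capture, transport, and monitoring code dependent only on the interface is what lets a codec or offload change stay local to one class.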

Comparison

| Feature | Hardware Encoding | Software Encoding |
|---|---|---|
| Latency | Very low, deterministic | Depends on CPU/GPU load; can vary |
| Throughput | High and stable under load | High when optimized; variable with workload |
| Flexibility | Low; codec support fixed | High; codecs and profiles can be updated |
| Update cycle | Longer refresh cycles; hardware replacement needed for new codecs | Shorter; software updates and patches drive codec changes |
| Cost context | Higher upfront; higher capex | Lower upfront; ongoing licensing or maintenance possible |
| Best for | Real-time, embedded, mission-critical streams | Flexible pipelines, prototyping, evolving standards |

Upsides of hardware encoding

- Low, predictable latency in critical paths

- Deterministic performance under sustained load

- Reduced host CPU overhead for encoding tasks

- Longer lifecycle stability for mission-critical deployments

- Dedicated hardware offloads reduce system variability

Negatives of hardware encoding

- Higher upfront cost and potential obsolescence risk

- Limited codec flexibility without hardware changes

- Longer lead times to adopt new codecs or standards

- Specialized maintenance for hardware components

Hardware encoding suits real-time, high-stability workflows; software encoding suits flexible, rapidly evolving workflows.

Choose hardware when latency and determinism matter most. Choose software when codec flexibility and rapid iteration are the priority.

FAQ

What is hardware encoding?

Hardware encoding uses dedicated silicon to perform encoding tasks, delivering low latency and predictable performance. It is common in broadcast gear, set-top boxes, and specialized appliances. Codecs are fixed to the hardware, which can limit updates but provides reliability.


What is software encoding?

Software encoding runs on general-purpose CPUs/GPUs and can be updated via software patches. It offers broad codec support and rapid experimentation, but latency can vary with system load. It’s ideal for flexible pipelines and development environments.


Which is faster for live streaming?

Hardware encoding typically provides lower and more predictable latency for live streaming. Software can approach similar performance on high-end systems but may experience swings during peak load.


Can hardware and software encoding be used together?

Yes. A hybrid approach uses hardware for the critical real-time path and software for processing, analytics, or non-time-sensitive encoding tasks. This can optimize both latency and flexibility.


What factors determine the best choice for a project?

Consider latency targets, codec requirements, upgrade cadence, total cost of ownership, and team expertise. A clear roadmap helps decide whether to invest in hardware, software, or a hybrid solution.


Main Points

- Assess latency tolerance before choosing encoding path

- Balance upfront cost against long-term flexibility

- Use a hybrid approach when both stability and agility are needed

- Plan for lifecycle and maintenance across hardware and software