Hardware vs Software Interrupts: A Practical Guide

Analytical comparison of hardware vs software interrupts, explaining triggers, latency, and design tradeoffs for DIYers and technicians building robust embedded systems.

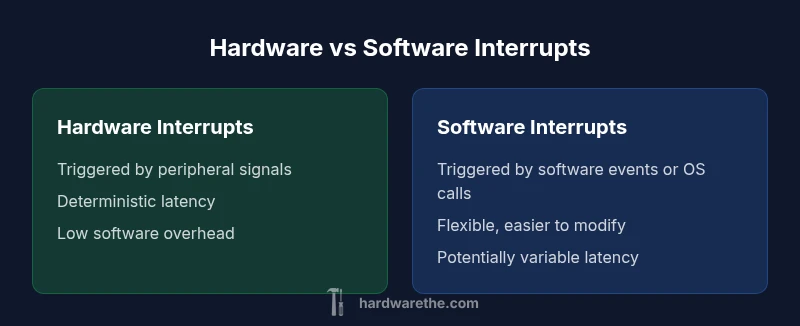

Hardware interrupts are designed for immediate, deterministic responses to peripheral events, while software interrupts offer flexibility and complex signaling but with potential latency variance. The best approach blends both: use hardware interrupts for time-critical tasks and software interrupts to coordinate or handle non-critical signaling. This balance improves real-time performance while preserving programmability.

Understanding Interrupts: The Essentials

Interrupts are a fundamental mechanism that lets a processor momentarily pause its current task to handle a higher-priority event, then resume. This mechanism underpins responsive control loops, event-driven sensor reads (as an alternative to constant polling), and timing-critical responses. When comparing hardware and software interrupts, the essential considerations are where the event originates, the path the signal takes, and the consequences of a missed signal. According to The Hardware, a practical view starts with three questions: what triggers the interrupt, how quickly can you service it, and what state must be preserved for the rest of the system. Hardware interrupts come from physical lines, such as IRQs connected to peripherals, and are designed to be fast, with deterministic latency. Software interrupts arise from software events, OS signals, or explicit software traps, and offer flexibility for non-real-time tasks but can suffer from scheduling delays. For DIY projects, matching the interrupt type to the real-time requirement improves reliability, and replacing busy polling with interrupts can reduce power draw.

Hardware Interrupts: Triggers, IRQs, and Handlers

Hardware interrupts are driven by external hardware events. When a peripheral needs attention, it asserts an interrupt request (IRQ) line, causing the CPU to suspend its current instruction flow and jump to an interrupt service routine (ISR). In practice, hardware interrupts are designed to be fast and predictable: the hardware takes care of signaling, and software handles only a short, carefully bounded ISR. Key concepts include masking (temporarily disabling interrupts during critical sections), priority levels, and the distinction between edge-triggered and level-triggered signals. For embedded designers, this means mapping peripherals to specific IRQs, ensuring that the ISR is concise, and avoiding long, blocking operations. The Hardware's guidance emphasizes minimizing work inside an ISR and deferring heavy processing to lower-priority tasks or a deferred procedure call. Proper use of an interrupt controller, such as a nested vectored interrupt controller (NVIC), reduces latency and preserves system responsiveness under load.

Software Interrupts: Software Initiated Signals and Exceptions

Software interrupts originate in the software domain, often as deliberate traps, software-generated signals, or OS-managed interrupts. They provide flexibility for complex control flow, signaling between tasks, or invoking kernel-level services without relying on hardware lines. Because they are raised from code rather than by an external line, software interrupts can be scheduled and coordinated with operating system policies, which makes them powerful in multi-tasking environments. However, this flexibility comes at the cost of potential latency variability and added software overhead. Designers should reserve software interrupts for events that do not demand ultra-low, deterministic latency and ensure that the ISR-like software handlers stay lean and quick, with heavy processing deferred to dedicated tasks or background work. The result is a layered interrupt strategy that leverages software interrupts for orchestration while leaving time-critical responses to hardware interrupts.
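One way to picture a software interrupt is as a numbered trap that is looked up in a table of handlers. The sketch below models only that lookup. The trap number `SWI_LOG` and the functions `register_swi` and `software_interrupt` are hypothetical names, not part of any real OS or CPU interface.

```python
# Hypothetical trap number and handler names, chosen only for illustration.
SWI_LOG = 1

vector_table = {}           # trap number -> handler, like a software vector table
log_lines = []


def register_swi(number, handler):
    """Install a handler for one software interrupt number."""
    vector_table[number] = handler


def software_interrupt(number, *args):
    """Model of a trap instruction: look up the handler and transfer control."""
    handler = vector_table.get(number)
    if handler is None:
        raise ValueError(f"unhandled software interrupt {number}")
    return handler(*args)


def swi_log(message):
    """Lean handler: store the message and return."""
    log_lines.append(message)
    return len(log_lines)


register_swi(SWI_LOG, swi_log)
count = software_interrupt(SWI_LOG, "sensor task finished")   # returns 1
```

Unlike a hardware IRQ, nothing here happens until code explicitly calls `software_interrupt`, which is why the timing depends on when the calling task gets to run.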

Interrupt Handling: Context Saving and ISR Design

When an interrupt occurs, the processor saves enough context to resume the interrupted task after the ISR completes. The exact sequence depends on architecture: some CPUs push registers to a hardware stack automatically, while others require explicit saving in the ISR. A well-designed ISR is short, fast, and non-blocking; it should perform only essential work and schedule longer tasks via deferred procedures, a message queue, or a task scheduler. Context saving is crucial for preventing data corruption when interrupts nest or preempt one another. Designers often use volatile variables, memory barriers, and naturally aligned data that can be read or written in a single access to reduce race conditions. The hardware-software boundary is where most subtle bugs live, so a disciplined approach to register preservation, stack depth, and reentrancy is essential for robust systems.
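The save-and-restore sequence, including nesting, can be illustrated with a toy model. The register names `r0`, `r1`, `pc` and the `Cpu` class are assumptions for this sketch; real architectures differ in which registers are saved automatically.

```python
class Cpu:
    """Toy CPU model with hypothetical register names r0, r1 and pc."""

    def __init__(self):
        self.regs = {"r0": 0, "r1": 0, "pc": 0}
        self.stack = []          # saved contexts, innermost interrupt on top
        self.max_depth = 0       # deepest nesting seen, useful for stack sizing

    def enter_isr(self, handler_pc):
        self.stack.append(dict(self.regs))   # save a copy of the live context
        self.max_depth = max(self.max_depth, len(self.stack))
        self.regs["pc"] = handler_pc

    def exit_isr(self):
        self.regs = self.stack.pop()         # restore the interrupted context


cpu = Cpu()
cpu.regs.update(r0=11, r1=22, pc=100)   # state of the interrupted task

cpu.enter_isr(handler_pc=0x40)          # low-priority interrupt arrives
cpu.regs["r0"] = 999                    # the ISR is free to clobber registers
cpu.enter_isr(handler_pc=0x80)          # higher-priority interrupt nests
cpu.regs["r0"] = -1
cpu.exit_isr()                          # back in the first ISR
inner_r0 = cpu.regs["r0"]               # 999, the first ISR's value survives
cpu.exit_isr()                          # back in the task, r0 is 11 again
```

Tracking `max_depth` hints at why nesting limits matter: each nested level consumes stack space for another saved context.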

Latency, Jitter, and Deadlines: Real-Time Considerations

Real-time systems demand predictable interrupt latency and minimal jitter. Latency is the time from an interrupt event to the beginning of the corresponding ISR, while jitter is the variability in that latency across cycles. Hardware interrupts aim for tight, deterministic latency, often bounded by the controller’s design and interrupt priority. Software interrupts introduce scheduling delays, context switches, and potential OS-induced latency, which may be acceptable for non-critical tasks but problematic for hard real-time requirements. When designing, define worst-case latency budgets, consider interrupt nesting limits, and evaluate whether a hardware IRQ path can meet deadlines with a lean ISR. The blended approach—hardware interrupts for critical signals and software interrupts for coordination—helps meet real-time goals without sacrificing flexibility.
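As a rough illustration of these definitions, latency and jitter can be computed from paired timestamps. The sample values and the 10 µs budget below are made up, and peak-to-peak spread is only one of several reasonable ways to express jitter.

```python
def latency_stats(event_times_us, isr_entry_times_us):
    """Return (worst_case, jitter) in microseconds from paired timestamps."""
    if len(event_times_us) != len(isr_entry_times_us) or not event_times_us:
        raise ValueError("need equal-length, non-empty timestamp lists")
    latencies = [entry - event
                 for event, entry in zip(event_times_us, isr_entry_times_us)]
    worst_case = max(latencies)
    jitter = worst_case - min(latencies)   # peak-to-peak variation
    return worst_case, jitter


# Made-up sample data, as might be read off a logic analyzer capture.
events = [0, 1000, 2000, 3000]
entries = [3, 1002, 2007, 3004]
worst, jitter = latency_stats(events, entries)   # (7, 5)

BUDGET_US = 10                          # assumed deadline for this example
meets_budget = worst <= BUDGET_US       # True
```

Comparing the worst case, not the average, against the budget is what makes this a deadline check.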

Architecture and OS Roles: Microcontrollers, SoCs, and Kernels

Microcontrollers typically employ simple interrupt controllers with fixed priorities and limited nesting. SoCs and general-purpose CPUs may use complex interrupt controllers, programmable priority schemas, and OS-driven interrupt handling. The kernel’s scheduler often interacts with interrupts, enabling or disabling preemption during critical sections and providing mechanisms for deferring work. In bare-metal MCU projects, you rely on the hardware interrupt controller and a concise ISR. In more capable systems, you leverage interrupts in concert with the OS to support robust multi-tasking and predictable timing. The choice of architecture shapes how you structure ISR length, what you do inside the ISR, and how you offload work to lower-priority tasks.

Peripherals and Interrupt Controllers: The Hardware Stack

Peripherals like sensors, timers, and communication interfaces rely on dedicated interrupt lines managed by interrupt controllers. A well-designed system maps each peripheral to a specific vector, assigns a clear priority, and uses masks to protect critical sequences. Modern controllers offer features such as nested interrupts, wake-up from low-power states, and hardware-assisted context saving. Exploiting these features can improve responsiveness while maintaining energy efficiency. Just as importantly, you must design the software side to react quickly: keep ISR code compact, avoid blocking calls, and use asynchronous processing patterns to keep the main loop responsive.
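The mapping of peripherals to priorities and masks can be modeled in a few lines. This is a simplified sketch: the `InterruptController` class, the peripheral names, and the convention that a lower number means higher priority are assumptions, and real controllers vary.

```python
class InterruptController:
    """Toy controller: the lowest priority number wins (an assumption)."""

    def __init__(self):
        self.priority = {}     # irq name -> priority
        self.pending = set()
        self.masked = set()

    def configure(self, irq, priority):
        self.priority[irq] = priority

    def raise_irq(self, irq):
        self.pending.add(irq)

    def mask(self, irq):
        self.masked.add(irq)

    def unmask(self, irq):
        self.masked.discard(irq)

    def next_irq(self):
        """Pick the highest-priority pending, unmasked IRQ, or None."""
        ready = [irq for irq in self.pending if irq not in self.masked]
        if not ready:
            return None
        chosen = min(ready, key=lambda irq: (self.priority[irq], irq))
        self.pending.remove(chosen)
        return chosen


ctrl = InterruptController()
ctrl.configure("timer", 0)      # hypothetical peripherals and priorities
ctrl.configure("uart", 2)
ctrl.configure("button", 5)

for irq in ("button", "uart", "timer"):
    ctrl.raise_irq(irq)
ctrl.mask("uart")               # protect a critical sequence that touches UART

order = [ctrl.next_irq(), ctrl.next_irq(), ctrl.next_irq()]
ctrl.unmask("uart")
order.append(ctrl.next_irq())   # order: timer, button, None, uart
```

The masked UART request is not lost. It stays pending and is served as soon as the mask is lifted, which is also why a long masked section adds latency for that source.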

Comparing Overheads and Implementation Tradeoffs

A core decision in interrupt design is the tradeoff between latency, determinism, and software complexity. Hardware interrupts excel in low, predictable latency and minimal CPU overhead during normal operation, especially when peripherals can trigger precise timing events. Software interrupts offer flexibility for event-driven architectures, easier testing, and simpler portability, but with uncertain latency and potential OS-driven delays. A balanced solution often assigns time-critical sensing and control to hardware interrupts, while using software interrupts to coordinate tasks, signal completion, or handle non-critical housekeeping. In practice, assess the cost of context switches, memory usage, and programming effort to select the most effective mix for your project.

Testing and Debugging Interrupts: Tools and Techniques

Testing interrupts requires dedicated test rigs and careful instrumentation. You should verify ISR length, nesting behavior, masking effects, and the system’s ability to handle simultaneous events. Use hardware simulators, logic analyzers, and oscilloscopes to measure latency from event to ISR entry and from ISR exit to resumption of normal operation. Software interrupts can be exercised with unit tests and deterministic test harnesses that simulate OS scheduling. Debugging tips include keeping ISRs reentrant, avoiding dynamic memory allocation inside interrupts, and validating that deferred processing correctly handles edge cases. Documentation of timing budgets and worst-case scenarios helps future maintenance and upgrades.

Security and Reliability: Risks and Safeguards

Interrupts introduce potential security and reliability risks, such as interrupt storms, priority inversion, or deadlock when ISRs interact with shared resources. Implement robust synchronization primitives, interrupt masking discipline, and clear ownership of resources between ISRs and regular tasks. Secure systems should also consider fault tolerance: ensure that critical interrupts are not dropped under abnormal conditions and provide safe fallback paths when a hardware fault occurs. Regular audits of interrupt paths, combined with failure-mode analysis, help maintain reliability in embedded devices and larger systems alike.
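One possible safeguard against interrupt storms is to count events from a source inside a sliding time window and throttle the source when it exceeds a limit. The sketch below assumes a hypothetical `StormGuard` helper and arbitrary limits. What "throttle" means in practice (masking the line, logging a fault) is left to the design.

```python
from collections import deque


class StormGuard:
    """Flags an interrupt source that fires too often within a time window."""

    def __init__(self, max_events, window_us):
        self.max_events = max_events
        self.window_us = window_us
        self.stamps = deque()

    def record(self, now_us):
        """Record one interrupt; return True if the source should be throttled."""
        self.stamps.append(now_us)
        # Discard timestamps that have fallen out of the sliding window.
        while self.stamps and now_us - self.stamps[0] >= self.window_us:
            self.stamps.popleft()
        return len(self.stamps) > self.max_events


guard = StormGuard(max_events=3, window_us=1000)          # assumed limits
normal = [guard.record(t) for t in (0, 400, 800, 1200)]   # all False
burst = [guard.record(t) for t in (1210, 1220, 1230)]     # all True
```

On a real device the timestamp list would need a fixed upper bound so that the guard itself cannot exhaust memory during a storm.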

Practical Guidelines: When to Use Hardware vs Software Interrupts

To decide between hardware and software interrupts, map events to urgency and determinism. Use hardware interrupts for time-sensitive signals where missing an event would cause system instability or safety concerns. Reserve software interrupts for coordination, signaling between tasks, and non-time-critical processing that benefits from OS-level features. Always aim for concise ISRs, offload heavy work, and validate timing under worst-case conditions. The Hardware emphasizes aligning design with real-time requirements while preserving flexibility for future changes.

Real-World Scenarios: Projects and Applications

In real projects, engineers often deploy hardware interrupts for critical sensing in automation, such as motor control or safety interlocks, because these require deterministic responses. Software interrupts come into play for user-interface events, background data processing, and orchestration tasks where latency is less critical. A small IoT device might use a hardware timer interrupt to sample sensors at precise intervals and software interrupts to manage network signaling or OTA updates. By combining approaches thoughtfully, you can achieve robust behavior, low power consumption, and maintainable code across a range of hardware platforms.

Comparison

| Feature | Hardware Interrupts | Software Interrupts |
|---|---|---|
| Trigger source | Peripheral hardware line (IRQ) | Software instruction or OS signal |
| Latency | Deterministic, typically low | Variable, tied to scheduling and context switches |
| Context saving | Hardware-assisted save of essential state; typically minimal ISR work | Software-handled context save; may involve OS and thread state |
| ISRs/tools | Low-level (C/assembly); tight, bounded code | Higher-level (kernel/user tasks); can use threading |
| Power/CPU load | Low during idle; quick servicing reduces wake time | Higher potential due to scheduling and task switches |
| Predictability | High predictability with fixed priorities | Less predictable; depends on OS design and workload |
| Best for | Time-critical peripherals and timing loops | Coordination, signaling, and non-time-critical tasks |

Upsides

- Low-latency response for time-critical events

- Deterministic behavior improves system reliability in real-time tasks

- Reduces CPU polling and saves power in embedded systems

- Enables precise peripheral control and timing accuracy

- Can be hardware-optimized for dedicated functions

Negatives

- Can require complex hardware design and careful timing

- Masking interrupts can introduce latency or jitter if misused

- Debugging ISR timing can be challenging

- Software interrupts may complicate portability across architectures

- Over-reliance on hardware interrupts can increase design stiffness

Hardware interrupts are generally preferred for real-time, time-critical signaling; software interrupts excel as flexible helpers for orchestration and non-critical tasks.

Choose hardware interrupts when determinism and fast response are essential. Use software interrupts to coordinate tasks and handle events that tolerate scheduling delays. A hybrid approach typically delivers the best balance of latency, flexibility, and maintainability.

FAQ

What is an interrupt in a processor?

An interrupt is a signal that temporarily stops the current program flow, allowing a higher-priority task to run. After the interrupt service is finished, the processor resumes where it left off. This mechanism enables responsive systems and efficient use of CPU time.

An interrupt tells the processor to pause what it's doing, handle something urgent, then resume.

How do hardware interrupts differ from software interrupts?

Hardware interrupts come from external devices via IRQ lines and are typically fast and deterministic. Software interrupts are generated by software or OS signals and offer flexibility but can have variable latency due to scheduling and context switches.

Hardware interrupts are triggered by hardware; software interrupts are triggered by software and OS actions.

What is an ISR and why is it important?

An ISR is an Interrupt Service Routine. It handles the immediate work required by an interrupt. Designing a good ISR means keeping it short, non-blocking, and deferring heavy work to later tasks to preserve system responsiveness.

An ISR handles the urgent event quickly and then hands off heavy lifting to other parts of the system.

How can I reduce interrupt latency in my design?

Reduce latency by assigning higher priority to critical interrupts, keeping ISRs short, using hardware timers for precise timing, and deferring long processing to non-interrupt context. Analyze worst-case timing to ensure deadlines are met.

Make ISRs short, give priority to critical interrupts, and defer heavy work to after the interrupt.

Can software interrupts replace hardware interrupts completely?

Software interrupts cannot fully replace hardware interrupts in time-critical scenarios because they depend on OS scheduling, which introduces variability. Use software interrupts where flexibility and orchestration are more important than ultra-low latency.

Software interrupts are flexible but don’t beat hardware interrupts for timing precision.

What are common pitfalls in interrupt design?

Common pitfalls include long ISRs, improper masking, priority inversion, and failure to offload work. Thorough testing under load and clear documentation of timing budgets help avoid these issues.

Watch out for long handlers, bad masking, and unpredictable scheduling.

Main Points

- Prioritize determinism for time-critical tasks

- Use software interrupts to manage orchestration and non-critical work

- Keep ISRs short; defer heavy processing to later stages

- Leverage interrupt controllers to manage priority and nesting

- Test timing budgets under worst-case conditions to ensure reliability