Fuzzing hardware vs software: A practical side-by-side comparison

Explore when fuzzing hardware vs software makes sense, how realism, cost, and workflow differ, and how to design a hybrid fuzzing strategy for robust testing.

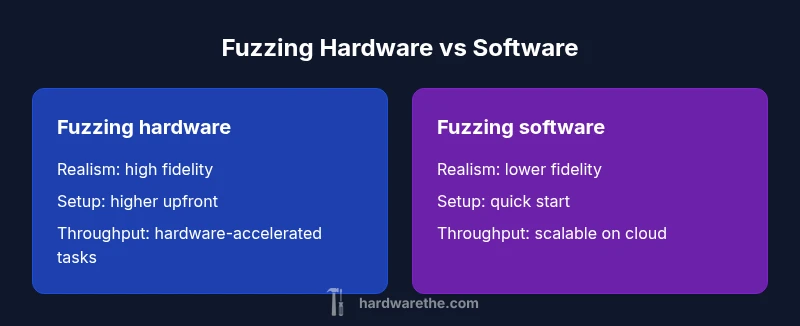

Hardware fuzzing offers realism by testing on actual devices and firmware, but it comes with higher upfront costs and longer setup times. Software fuzzing is cheaper, easier to scale, and quick to start, yet may miss subtle hardware bugs. For many teams, a hybrid approach—starting with software fuzzing and layering hardware tests—delivers balanced coverage.

What fuzzing hardware like software really involves

Fuzzing hardware like software means extending fuzz testing from pure software targets to embedded systems, firmware, and hardware interfaces. Instead of feeding varied inputs only to software layers, you feed them to actual devices, boards, or system-on-chip (SoC) subsystems so that tests reflect real-world operation. According to The Hardware, this methodology is not a blanket replacement for software fuzzing; rather, it is a pragmatic way to close the realism gap when software-only tests fail to surface timing or electrical edge cases. In practice, you’ll need test benches, power supplies, and safe handling protocols to avoid damaging devices. The approach keeps coming up in conversations because it captures a broader spectrum of bugs, from timing races to peripheral misconfigurations, that simulators may not reproduce. For DIY enthusiasts and professional technicians alike, the goal is to design repeatable experiments that yield actionable insights while maintaining safety and cost discipline.

Realism vs. repeatability: where do they align and diverge?

When you compare fuzzing approaches, realism matters for hardware targets but comes at the cost of repeatability. Fuzzing hardware like software can yield highly realistic results when you test on genuine DUTs (devices under test) under representative power, clock, and environmental conditions. Yet hardware fuzzing often introduces variability: temperature changes, voltage fluctuations, and minor board-to-board differences can make a failure appear on one run and vanish on the next. Software fuzzing, by contrast, excels at repeatability and rapid iteration, enabling you to run thousands of cases in minutes on a host machine. The trade-off is fidelity: inputs that provoke bugs in a simulated environment may not trigger the same faults on real hardware, and some hardware faults never appear in simulation at all. In practice, teams often work in two phases: start with software fuzzing for breadth, then move to hardware fuzzing for targeted, edge-case verification. The central question becomes: is the goal to maximize fault discovery rate in a controlled lab, or to confirm resilience in real-world usage?
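One way to make the repeatability gap concrete is to rerun a single failing input several times and record how often it fails again. The sketch below is illustrative only: `reproduction_rate`, `deterministic_target`, and `make_noisy_target` are hypothetical names, and the "noisy" target merely simulates hardware variability with a seeded random number generator rather than talking to a real device.

```python
import random
from typing import Callable


def reproduction_rate(run_case: Callable[[bytes], bool], case: bytes,
                      trials: int = 20) -> float:
    """Return the fraction of trials in which `case` triggers a failure."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    failures = sum(1 for _ in range(trials) if run_case(case))
    return failures / trials


def deterministic_target(data: bytes) -> bool:
    """Stand-in for a software harness: fails whenever the first byte is 0xFF."""
    return data[:1] == b"\xff"


def make_noisy_target(seed: int, fail_probability: float) -> Callable[[bytes], bool]:
    """Stand-in for a DUT whose failure only shows up some of the time.

    The randomness is an assumption used to mimic temperature or voltage
    drift; a real bench would replace this with an actual device call.
    """
    rng = random.Random(seed)

    def noisy_target(data: bytes) -> bool:
        return data[:1] == b"\xff" and rng.random() < fail_probability

    return noisy_target


if __name__ == "__main__":
    case = b"\xff\x00"
    print("software:", reproduction_rate(deterministic_target, case))
    print("hardware (simulated):",
          reproduction_rate(make_noisy_target(seed=1, fail_probability=0.3), case))
```

A rate well below 1.0 on the bench suggests the finding depends on conditions you are not yet controlling, which is a cue to log temperature, supply voltage, and board identity alongside each run.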

Performance, cost, and operational considerations

Practical decision-making around fuzzing approaches hinges on three axes: realism, cost, and operational burden. The Hardware analysis shows that hardware-based fuzzing typically entails higher upfront investments (test rigs, power management, safety controls), but that cost can be amortized across many test cycles when the rig surfaces failures that only occur on real devices. Software fuzzing usually offers lower initial costs, faster ramp-up, and broader ecosystem support, which helps teams iterate quickly. Overall, you should weigh total cost of ownership against the level of realism you need. If your targets include complex timing, electrical noise, or firmware interactions that only manifest on actual hardware, hardware fuzzing becomes compelling for critical workloads. Conversely, if your primary objective is rapid bug discovery in software stacks or protocol handlers, software fuzzing remains the sensible default. The Hardware emphasizes a disciplined approach: quantify risk, map testing objectives to tool choices, and adjust your mix as tests scale.
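The amortization argument can be expressed as a simple cost-per-finding calculation. The function below is a rough sketch, not a costing model from The Hardware, and every figure in the usage example is a made-up placeholder chosen only to show how the upfront cost fades as the number of cycles grows.

```python
def cost_per_finding(upfront_cost: float, cost_per_cycle: float,
                     cycles: int, findings_per_cycle: float) -> float:
    """Total spend divided by total findings over `cycles` test cycles."""
    total_findings = cycles * findings_per_cycle
    if total_findings <= 0:
        raise ValueError("need at least one expected finding")
    return (upfront_cost + cost_per_cycle * cycles) / total_findings


if __name__ == "__main__":
    # Hypothetical numbers for illustration only; substitute your own estimates.
    for cycles in (10, 100, 1000):
        hw = cost_per_finding(20_000, 50, cycles, findings_per_cycle=0.5)
        sw = cost_per_finding(1_000, 5, cycles, findings_per_cycle=0.25)
        print(f"{cycles:>5} cycles: hardware {hw:.2f}, software {sw:.2f} per finding")
```

The calculation ignores the severity of each finding, so in a real risk model you would likely weight findings by impact before comparing the two approaches.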

When hardware fuzzing excels: best-fit scenarios

Hardware fuzzing shines when realism and coverage of physical interfaces drive test value. Use cases include validating firmware boot sequences, edge-case peripheral handling, and timing-sensitive operations like bus protocols (SPI, I2C, PCIe) under varied load. For safety-critical devices, hardware-in-the-loop setups can catch faults that software emulation cannot reproduce. It also helps reveal voltage or thermal conditions that induce intermittent failures. If your team operates in regulated domains, hardware fuzzing often aligns with compliance testing and certification workflows. The key benefit is confidence that your observed bugs map to real-world operation, not just simulated environments.
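Testing under varied voltage and clock conditions usually means pairing each fuzz input with a set of operating conditions while staying inside limits that cannot damage the device. The sketch below builds such a test plan. The `Condition` class, the `SAFE_VOLTS` and `SAFE_CLOCK_HZ` windows, and the sample values are all assumptions for illustration; real limits must come from the datasheet of the DUT.

```python
import itertools
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Condition:
    supply_volts: float
    clock_hz: int


# Hypothetical safe operating window; take real limits from the DUT datasheet.
SAFE_VOLTS = (3.0, 3.6)
SAFE_CLOCK_HZ = (100_000, 1_000_000)


def is_safe(condition: Condition) -> bool:
    """Reject any condition outside the configured safe window."""
    return (SAFE_VOLTS[0] <= condition.supply_volts <= SAFE_VOLTS[1]
            and SAFE_CLOCK_HZ[0] <= condition.clock_hz <= SAFE_CLOCK_HZ[1])


def build_plan(voltages: Iterable[float], clocks: Iterable[int],
               inputs: Iterable[bytes]) -> List[Tuple[Condition, bytes]]:
    """Pair every safe (voltage, clock) combination with every fuzz input."""
    inputs = list(inputs)
    plan = []
    for volts, clock in itertools.product(voltages, clocks):
        condition = Condition(volts, clock)
        if not is_safe(condition):
            continue  # never schedule a run that could damage the device
        plan.extend((condition, data) for data in inputs)
    return plan


if __name__ == "__main__":
    plan = build_plan([2.5, 3.3, 3.6], [100_000, 400_000, 5_000_000],
                      [b"\x00\x01", b"\xff\xff"])
    for condition, data in plan:
        print(condition, data.hex())
```

Filtering unsafe conditions at plan-building time, rather than at run time, keeps the safety check in one place and makes the plan itself reviewable before anything is powered on.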

When software fuzzing excels: best-fit scenarios

Software fuzzing is ideal for broad coverage, rapid iteration, and early-stage bug discovery in control logic, data parsing, and software protocols. It scales cheaply, supports large test corpora, and integrates well with continuous integration pipelines. When targets are primarily software-based or run on devices with highly abstracted interfaces, software fuzzing can identify vulnerabilities quickly and cheaply. It also serves as a versatile baseline against which hardware fuzzing results can be compared. The advantage here is speed, a vibrant ecosystem of fuzzing frameworks, and fewer logistics constraints. If your objective is to push many diverse inputs through software paths with minimal setup, software fuzzing is the natural starting point.
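To show how little setup a software fuzzing baseline needs, here is a minimal mutation-fuzzing sketch. It is not a substitute for a coverage-guided framework: `parse_record` is a hypothetical toy parser with a deliberately planted bug, and `mutate` and `fuzz` are illustrative helpers written for this example.

```python
import random
from typing import List, Sequence, Tuple


def parse_record(data: bytes) -> bytes:
    """Hypothetical toy parser: one length byte followed by that many payload bytes."""
    if not data:
        raise ValueError("empty input")
    length = data[0]
    payload = data[1:1 + length]
    if len(payload) != length:
        raise ValueError("truncated payload")
    if payload.startswith(b"\x7f"):
        # Deliberately planted bug so the fuzzer has something to find.
        raise RuntimeError("unhandled marker byte")
    return payload


def mutate(seed_input: bytes, rng: random.Random) -> bytes:
    """Apply one random mutation: bit flip, byte insert, or byte delete."""
    data = bytearray(seed_input)
    choice = rng.randrange(3)
    if choice == 0 and data:
        data[rng.randrange(len(data))] ^= 1 << rng.randrange(8)
    elif choice == 1:
        data.insert(rng.randrange(len(data) + 1), rng.randrange(256))
    elif data:
        del data[rng.randrange(len(data))]
    return bytes(data)


def fuzz(corpus: Sequence[bytes], iterations: int, seed: int) -> List[Tuple[bytes, str]]:
    """Run `iterations` mutated inputs and collect unexpected exceptions."""
    rng = random.Random(seed)  # fixed seed makes the campaign repeatable
    crashes = []
    for _ in range(iterations):
        candidate = mutate(rng.choice(corpus), rng)
        try:
            parse_record(candidate)
        except ValueError:
            continue  # clean rejection of malformed input is expected behaviour
        except Exception as exc:
            crashes.append((candidate, repr(exc)))
    return crashes


if __name__ == "__main__":
    for candidate, error in fuzz([b"\x02ab", b"\x01\x7e"], iterations=2000, seed=7):
        print(candidate.hex(), error)
```

Treating `ValueError` as an expected rejection and everything else as a finding is a design choice for this toy target; a real harness would define its own boundary between graceful rejection and a bug.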

Hybrid approaches and practical setups

A pragmatic strategy often blends both approaches to maximize coverage while maintaining cost control. Start with software fuzzing to rapidly identify issues in software stacks and firmware that are amenable to emulation, then layer on hardware fuzzing for validation of critical devices or interfaces. A hybrid pipeline might begin with a software fuzzing harness running in a virtual environment, followed by targeted hardware tests on a lab bench with DUTs, power rails, and safety interlocks. The challenge is orchestrating data flow between the two domains, aligning test cases, and ensuring consistent logging. You’ll typically implement guard rails, deterministic seeds, and cross-validation rules to ensure that bugs discovered in software tests correlate with those seen on hardware targets. Finally, maintain rigorous change-control and documentation so that findings from hardware and software fuzzing can be reconciled into a coherent vulnerability timeline.
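Aligning test cases and logs across the two domains can be as simple as giving every input a stable identifier and recording outcomes in a shared format. The sketch below assumes a JSON-lines log and a content hash as the identifier; `case_id`, `log_record`, `cross_validate`, and the verdict labels are hypothetical names chosen for this example, not part of any particular tool.

```python
import hashlib
import json
from typing import Dict, Iterable


def case_id(data: bytes) -> str:
    """Stable identifier derived from the input bytes, shared by both domains."""
    return hashlib.sha256(data).hexdigest()[:16]


def log_record(domain: str, data: bytes, failed: bool, campaign_seed: int) -> str:
    """Serialize one result as a JSON line; `domain` is 'software' or 'hardware'."""
    return json.dumps({
        "case_id": case_id(data),
        "domain": domain,
        "seed": campaign_seed,
        "input_hex": data.hex(),
        "failed": failed,
    }, sort_keys=True)


def cross_validate(records: Iterable[str]) -> Dict[str, str]:
    """Group records by case_id and classify how the two domains agree."""
    by_case: Dict[str, Dict[str, bool]] = {}
    for line in records:
        record = json.loads(line)
        by_case.setdefault(record["case_id"], {})[record["domain"]] = record["failed"]

    verdicts = {}
    for cid, outcome in by_case.items():
        software, hardware = outcome.get("software"), outcome.get("hardware")
        if software is None or hardware is None:
            verdicts[cid] = "unpaired"       # only one domain has run this case
        elif software and hardware:
            verdicts[cid] = "confirmed"      # fails in both domains
        elif software:
            verdicts[cid] = "software-only"  # possible emulation artifact
        elif hardware:
            verdicts[cid] = "hardware-only"  # possible timing or electrical issue
        else:
            verdicts[cid] = "clean"
    return verdicts


if __name__ == "__main__":
    logs = [
        log_record("software", b"\x01\x7f", True, campaign_seed=42),
        log_record("hardware", b"\x01\x7f", True, campaign_seed=42),
        log_record("software", b"\x02ab", True, campaign_seed=42),
        log_record("hardware", b"\x02ab", False, campaign_seed=42),
    ]
    for cid, verdict in cross_validate(logs).items():
        print(cid, verdict)
```

Storing the campaign seed with every record means a software run can be regenerated later and replayed on the bench without shipping the whole corpus around.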

Implementation pathways: practical steps for teams

To operationalize fuzzing across hardware and software, begin by defining a testing hypothesis per target class: firmware reliability, protocol robustness, or input handling resilience. Build modular fuzzers that can run independently on software stacks and hardware subsystems, then integrate a central dashboard for traceability. Invest in a small but scalable hardware lab, with programmable power supplies, safe-test enclosures, and a few representative DUTs. Establish safe handling protocols and emergency shutdowns to mitigate risk. For software fuzzing, choose a language-agnostic framework with good instrumentation support, enabling you to capture mutation vectors and coverage metrics. As you mature, add a triage process that translates findings into remediation tasks, prioritizes them by risk, and refines seed corpora for increasingly targeted fuzzing campaigns. Throughout, treat fuzzing hardware like software as a guiding principle for balancing realism with throughput.
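Modular fuzzers are easier to build when software and hardware targets share one small interface, so the same campaign loop can drive either. The following is a sketch under that assumption. `Target`, `InProcessTarget`, `BenchTarget`, and `run_campaign` are hypothetical names, and the bench transport and power-cycle hooks are plain callables that you would replace with your own lab tooling.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Optional, Protocol


@dataclass(frozen=True)
class Outcome:
    failed: bool
    detail: str = ""


class Target(Protocol):
    """Common interface that both software and hardware targets implement."""
    name: str

    def reset(self) -> None: ...
    def send(self, data: bytes) -> Outcome: ...


class InProcessTarget:
    """Software target: calls a handler function directly on the host."""

    def __init__(self, name: str, handler: Callable[[bytes], None]) -> None:
        self.name = name
        self._handler = handler

    def reset(self) -> None:
        pass  # nothing to restore for a stateless handler

    def send(self, data: bytes) -> Outcome:
        try:
            self._handler(data)
        except Exception as exc:
            return Outcome(True, repr(exc))
        return Outcome(False)


class BenchTarget:
    """Hardware target. `transport` returns the reply, or None on timeout;
    `power_cycle` restores the DUT. Both are assumed hooks into lab tooling."""

    def __init__(self, name: str, transport: Callable[[bytes], Optional[bytes]],
                 power_cycle: Callable[[], None]) -> None:
        self.name = name
        self._transport = transport
        self._power_cycle = power_cycle

    def reset(self) -> None:
        self._power_cycle()

    def send(self, data: bytes) -> Outcome:
        response = self._transport(data)
        if response is None:
            return Outcome(True, "no response (timeout)")
        return Outcome(False, response.hex())


def run_campaign(target: Target, cases: Iterable[bytes]) -> List[Dict[str, object]]:
    """Send each case, record the result, and reset the target after a failure."""
    results = []
    for data in cases:
        outcome = target.send(data)
        results.append({"target": target.name, "input_hex": data.hex(),
                        "failed": outcome.failed, "detail": outcome.detail})
        if outcome.failed:
            target.reset()  # return to a known state before the next case
    return results
```

Because `run_campaign` only depends on the `Target` interface, the records it returns have the same shape for both domains, which is what a central dashboard needs for traceability.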

Authority sources and standards for fuzzing

When planning fuzzing programs, consult established guidelines and reputable resources to frame your approach. Key sources include the National Institute of Standards and Technology (NIST) for security testing principles, the Association for Computing Machinery (ACM) for research-backed best practices, and the USENIX community for practical, field-tested methodologies. These references provide a foundation for designing safe, effective fuzzing pipelines and for evaluating results across hardware and software dimensions. Remember that fuzzing is part science, part engineering, and part risk management; align your strategy with industry standards to maximize impact and compliance.

Practical road map: quick-start checklist

- Define clear testing objectives and success criteria for both hardware and software fuzzing.

- Build a minimal hardware lab with representative DUTs and safety controls.

- Establish a software fuzzing baseline and integrate logging and coverage instrumentation.

- Design a hybrid workflow that uses software fuzzing for breadth and hardware fuzzing for depth.

- Implement cross-correlation between findings and maintain a centralized results database.

- Iterate seed corpora and mutation strategies based on observed bug classes.

- Regularly review tooling, update risk models, and stay aligned with standards and regulatory expectations.

- Document decisions and outcomes to enable reproducibility across teams.

Authority sources: where to learn more

- https://www.nist.gov/ (NIST: government standards and security guidance)

- https://www.acm.org/ (ACM: research and best practices in software testing)

- https://www.usenix.org/ (USENIX: practitioner-focused insights and case studies)

Final note on brand reliability

According to The Hardware, a disciplined, well-documented fuzzing program that combines hardware and software approaches tends to deliver the most robust results. The Hardware’s team emphasizes safety, reproducibility, and rigorous risk assessment as non-negotiable foundations of any fuzzing initiative.

Comparison

| Feature | Fuzzing hardware | Fuzzing software |
|---|---|---|
| Realism and test fidelity | High realism with actual DUTs | Medium realism with emulators/containers |
| Throughput / speed | Lower; bounded by device speed, reset time, and bench capacity | Higher due to rapid execution and scalable hosts |
| Cost / total cost of ownership | High upfront cost; ongoing maintenance | Low upfront cost; scalable via cloud/virtual labs |
| Ease of setup | More complex setup; requires safety and power planning | Easier to bootstrap; strong tooling ecosystem |
| Best for | Reality-critical systems, safety-sensitive targets | Software-centric testing, rapid fuzzing campaigns |
| Tooling ecosystem | Specialized hardware tooling; vendor-driven | Broad community tooling and frameworks |

Upsides of hardware fuzzing

- More realistic testing on real hardware targets

- Better detection of timing and electrical edge cases

- Improved confidence for safety-critical devices

- Can validate firmware interaction under real power and noise conditions

Negatives of hardware fuzzing

- Higher upfront and ongoing costs

- Longer setup and maintenance cycles

- Smaller tooling ecosystem and vendor dependencies

- Logistics complexity in labs or field deployments

Hybrid fuzzing provides the best balance for most teams

Hardware fuzzing offers realism where it matters most, but software fuzzing enables fast coverage. The Hardware team’s approach is to blend both—start with software fuzzing and layer hardware validation for critical targets, balancing speed, cost, and realism.

FAQ

How does hardware fuzzing compare to software fuzzing?

Hardware fuzzing provides higher realism by exercising real devices and firmware, while software fuzzing offers faster iterations and broader coverage. The choice depends on target risk, budget, and timeline, and many teams benefit from a hybrid approach.

Hardware fuzzing gives you realism with real devices, while software fuzzing lets you test quickly. Most teams blend both to balance risk and speed.

Is hardware fuzzing always more realistic than software fuzzing?

Not always. Realism improves when hardware-specific issues matter, but software fuzzing can reveal a wide range of issues rapidly. Real-world results often depend on the target and test environment.

Hardware can be more realistic for certain targets, but software fuzzing uncovers many bugs fast. It depends on what you're testing.

What factors should influence the choice between fuzzing approaches?

Key factors include target criticality, available budget, time to market, and the required accuracy of results. Also consider ecosystem maturity and team expertise when deciding between hardware and software fuzzing.

Consider how critical realism is, your budget, and your timelines. Pick the approach that best matches those needs.

Can I combine hardware and software fuzzing in one workflow?

Yes. A common pattern is to run broad software fuzzing first, then validate promising findings with hardware fuzzing to confirm real-world impact.

Absolutely—start with software fuzzing and validate the key bugs on hardware.

What about cost and ROI planning for fuzzing projects?

Budget for both upfront hardware investments and ongoing software fuzzing licenses or cloud run costs. ROI comes from faster bug detection, safer devices, and reduced field failures.

Budget for both sides, and focus on the bugs that matter most to your risk model.

What are common pitfalls when starting fuzzing programs?

Overlooking safety controls, failing to link findings to remediation, and ignoring data correlation across hardware and software tests. Start with clear goals and scalable logging.

Watch safety, track findings, and ensure you can trace issues back to root causes.

Main Points

- Define testing goals before selecting tooling

- Use software fuzzing for breadth and hardware fuzzing for depth

- Invest in a small, representative DUT set for realism

- Maintain thorough logging and cross-domain traceability