What a Hardware Accelerated GPU Does

Discover how hardware accelerated GPUs speed graphics, video processing, and AI tasks. Learn how GPU offloading works, when to enable it, and setup tips.

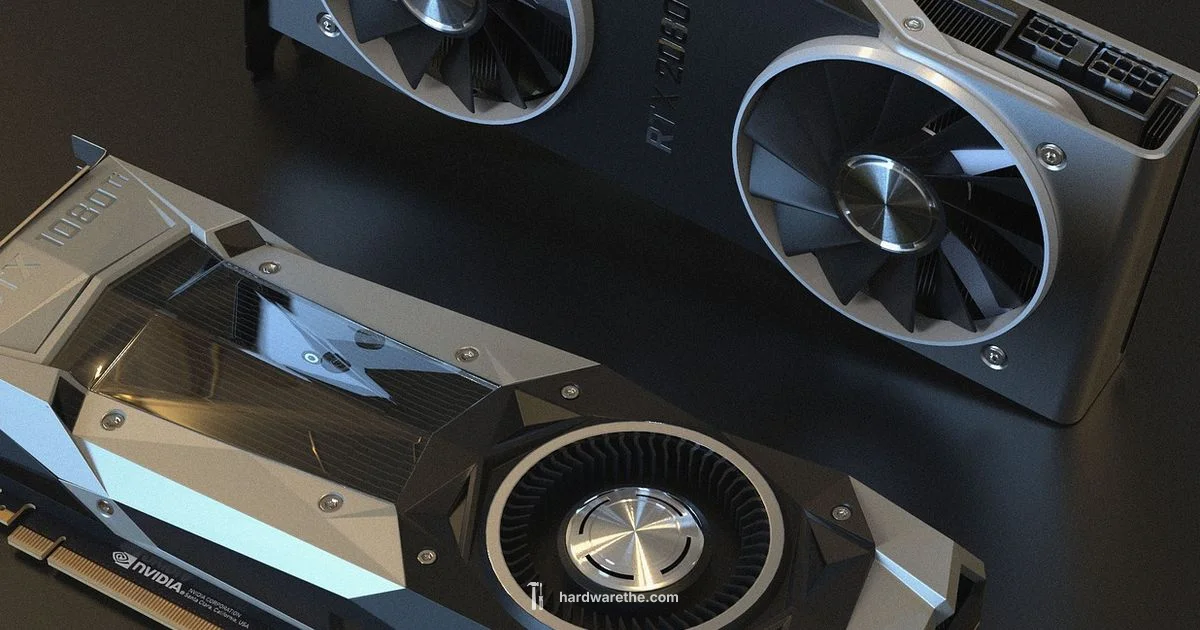

Hardware accelerated GPU computing means using a graphics processing unit (GPU) for compute workloads in addition to drawing graphics, which speeds up tasks like video encoding, 3D rendering, and AI inference.

What a hardware accelerated GPU does

According to The Hardware, a hardware accelerated GPU offloads parallelizable workloads from the CPU to the GPU, taking advantage of many small processing units running in parallel. This design makes tasks such as rendering complex scenes, encoding video, and running AI inference much faster than CPU-only execution, provided the work parallelizes well. While the CPU handles general control flow and serial tasks, the GPU excels at data-parallel work: the same operation applied to many data elements at once. The practical effect is smoother graphics in games, faster video edits, and more responsive simulations. In professional environments, researchers and technicians rely on GPUs to accelerate rendering pipelines, batch simulations, and real-time analytics. The key takeaway is that a GPU can act as a powerful parallel compute engine when software supports it, rather than being limited to drawing pixels on a screen.

How GPU acceleration works in practice

GPU acceleration divides work into many small tasks that hundreds or thousands of lightweight cores can execute simultaneously. Programs supply kernels or shaders that describe an operation to apply across a large data set, and the GPU runs many instances of that operation in parallel. Data transfer between CPU memory and GPU memory can be a bottleneck, so efficient pipelines minimize transfers and reuse data already in GPU memory. Modern APIs and runtimes provide hardware abstraction layers that map code to GPU resources, handle synchronization, and optimize scheduling. You may encounter terms like CUDA or OpenCL for compute, and DirectX or Vulkan for graphics pipelines (both of which also expose compute shaders). In practice, enabling GPU acceleration in a video editor, a 3D renderer, or a machine learning framework typically requires a compatible driver, a supported API, and software that can express the work as parallel tasks. When configured correctly, the GPU handles the intensive parts of the workload while the CPU coordinates the process and manages I/O.
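To make the kernel idea concrete, here is a minimal sketch in plain Python. It is a CPU-only illustration of the programming model, not real GPU code: `saxpy_kernel` and `launch` are hypothetical names chosen for this example, and the loop in `launch` stands in for what a GPU would do in parallel.

```python
# CPU-only illustration of the kernel programming model (not real GPU code).
# The names saxpy_kernel and launch are made up for this sketch.

def saxpy_kernel(i, a, x, y, out):
    # One kernel instance handles exactly one element, selected by its index i.
    out[i] = a * x[i] + y[i]


def launch(kernel, n, *args):
    # A GPU would run these n instances in parallel across its cores.
    # This loop runs them one after another purely to show the model.
    for i in range(n):
        kernel(i, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)

launch(saxpy_kernel, len(x), 2.0, x, y, out)
print(out)
```

Because no instance reads another instance's output, the instances can run in any order or all at once, which is what makes this kind of work a good fit for a GPU.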

Core technologies and terminology you should know

Several hardware and software concepts determine how effectively a GPU accelerates tasks. GPU cores execute many small programs in parallel, which increases throughput for repetitive operations. Tensor cores or matrix engines accelerate AI workloads by performing large matrix multiplications in dedicated hardware. Ray tracing cores or similar hardware accelerate realistic lighting calculations in real time. Memory bandwidth and on-board cache affect how quickly data moves through the GPU. APIs and libraries such as CUDA, OpenCL, DirectX, and Vulkan provide ways for software to request acceleration, manage resources, and handle synchronization. Finally, drivers and runtime systems translate high-level code into GPU instructions, and their optimizations mean performance can change with the driver version and OS. Understanding these terms helps you diagnose bottlenecks and choose the right hardware for your workloads.

Typical use cases across industries

GPU acceleration touches many areas. In gaming and virtual production, GPUs render complex scenes and deliver high frame rates with stable latency. In video editing and transcoding, hardware acceleration speeds up color grading, encoding, and effects processing. For scientific computing and data analysis, GPUs perform large-scale simulations and rapid data processing that would be impractical on CPUs alone. In machine learning and AI development, GPUs train models and run inference much faster by exploiting matrix operations and parallel computation. Engineers also use GPUs for CAD, 3D design, and VR/AR workflows where real-time feedback is critical. The common thread across these use cases is a parallelizable workload that benefits from moving compute work from the CPU to the GPU while keeping control flow on the CPU.

Benefits and tradeoffs you should expect

The main benefits of hardware accelerated GPUs are higher throughput, shorter processing times, and the ability to tackle larger or more complex tasks. For many workflows, GPUs enable real-time feedback, smoother playback, and faster iteration cycles. However, there are tradeoffs to consider. A GPU under load draws more power and generates more heat, which can require better cooling and noise management. Software compatibility matters: not every program supports hardware acceleration equally, and some features may only run on certain APIs or driver versions. There is also a learning curve, because developers must restructure algorithms to run efficiently on a parallel architecture. Finally, you may encounter diminishing returns if the workload is not sufficiently parallelizable or if data transfer between CPU and GPU becomes the bottleneck. A well-balanced system and careful software enablement can maximize gains while avoiding common bottlenecks.
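The diminishing-returns point can be put into numbers with an Amdahl's-law style estimate. The sketch below is a rough model, not a benchmark: the function name `estimated_speedup` and all input values are illustrative assumptions, and real results depend on the actual hardware and software.

```python
def estimated_speedup(parallel_fraction, gpu_speedup, transfer_overhead=0.0):
    """Rough Amdahl's-law estimate of the overall speedup from GPU offloading.

    parallel_fraction: share of the original runtime that can move to the GPU (0..1)
    gpu_speedup:       how many times faster that share runs on the GPU
    transfer_overhead: extra time spent copying data between CPU and GPU,
                       expressed as a fraction of the original runtime
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    if transfer_overhead < 0:
        raise ValueError("transfer_overhead must not be negative")

    serial_time = 1.0 - parallel_fraction          # stays on the CPU
    gpu_time = parallel_fraction / gpu_speedup     # accelerated share
    new_time = serial_time + gpu_time + transfer_overhead
    return 1.0 / new_time


# Hypothetical scenarios: the same GPU helps very differently depending on
# how much of the job is parallel and how much time the copies cost.
for fraction, overhead in [(0.9, 0.0), (0.5, 0.0), (0.9, 0.2)]:
    speedup = estimated_speedup(fraction, gpu_speedup=20, transfer_overhead=overhead)
    print(f"parallel={fraction:.0%} transfer={overhead:.0%} -> {speedup:.2f}x")
```

Even with a GPU that runs the parallel part 20 times faster, the serial remainder and the copy time cap the overall gain, which is why a mostly sequential job sees little benefit.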

How to decide if hardware acceleration is right for you

Start by profiling your workloads and listing the tasks that take the most time on the CPU. If those tasks can be expressed as parallel operations, or if your software explicitly offers acceleration options, hardware acceleration is worth exploring. Check your software's documentation for supported APIs and required drivers, then verify that your GPU model and system meet these prerequisites. Consider the rest of the system: CPU speed, memory bandwidth, PCIe lane availability, and cooling. If you routinely work with video editing, 3D rendering, ML, or large simulations, enabling GPU acceleration is likely to provide meaningful benefits. On the other hand, lightweight or mostly sequential tasks may see little improvement. Finally, budget and power constraints matter: hardware acceleration generally pays off most on mid-range to high-end GPUs with ample VRAM and memory bandwidth.
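If your workload is a script you control, the profiling step might look like the following sketch, which uses Python's standard `cProfile` and `pstats` modules. The functions `scale_pixels`, `checksum`, and `workload` are hypothetical stand-ins for real work; substitute your own entry point.

```python
import cProfile
import io
import pstats


def scale_pixels(pixels, gain):
    # The same arithmetic applied independently to every element: data-parallel.
    return [min(255, int(p * gain)) for p in pixels]


def checksum(pixels):
    # Each step depends on the previous total, so this loop is sequential as written.
    total = 0
    for p in pixels:
        total = (total * 31 + p) % 65521
    return total


def workload():
    pixels = list(range(256)) * 200
    return checksum(scale_pixels(pixels, 1.2))


def profile_report(func, top=10):
    """Run func under cProfile and return the top entries by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats("cumulative").print_stats(top)
    return buffer.getvalue()


report = profile_report(workload)
print(report)
```

Functions near the top of the report that apply one operation across a large collection, like `scale_pixels` here, are the candidates worth checking for GPU support. A function with a step-by-step dependency, like `checksum`, is less likely to benefit.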

Getting started with enabling hardware acceleration in software

Begin by updating your graphics driver to the latest version from the GPU vendor. Next, open the application settings and look for a section labeled hardware acceleration, compute, or performance. Enable the option and restart the program if required. If you use multiple programs, you may need to configure each one individually. After enabling acceleration, run a representative workload and monitor performance, latency, and temperatures. It is wise to run a baseline comparison with the CPU-only configuration to quantify gains. Finally, keep an eye on driver updates and API changes, because new releases can shift performance or compatibility. If issues arise, check for conflicting plugins, verify that data is staged in GPU memory, and ensure that other system components are not bottlenecks.
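The baseline comparison can be as simple as timing the same job in both configurations. The sketch below assumes you can trigger each configuration from a Python callable. `run_cpu_only` and `run_accelerated` are placeholders invented for this example, and the second one merely does less work to mimic a faster path.

```python
import statistics
import time


def time_runs(func, repeats=5):
    """Return the median wall-clock time in seconds over several runs of func()."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        func()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def compare(baseline, candidate, repeats=5):
    """Time both callables and report how many times faster the candidate is."""
    base_s = time_runs(baseline, repeats)
    cand_s = time_runs(candidate, repeats)
    speedup = base_s / cand_s if cand_s > 0 else float("inf")
    return {"baseline_s": base_s, "candidate_s": cand_s, "speedup": speedup}


# Placeholders: in real use these would run the same export, render, or
# inference job with hardware acceleration switched off and on.
def run_cpu_only():
    return sum(i * i for i in range(50_000))


def run_accelerated():
    return sum(i * i for i in range(5_000))


result = compare(run_cpu_only, run_accelerated)
print(f"baseline {result['baseline_s']:.6f}s, "
      f"candidate {result['candidate_s']:.6f}s, "
      f"speedup {result['speedup']:.1f}x")
```

Using the median of several runs reduces the influence of one-off slowdowns such as caches warming up or background activity. Run both configurations on the same input so the comparison is fair.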

FAQ

What does a hardware accelerated GPU do?

A hardware accelerated GPU performs compute tasks using the GPU instead of the CPU, speeding up parallel workloads such as graphics, video processing, and AI inference.

It uses the GPU to handle compute tasks, speeding up graphics, video processing, and AI workloads.

How can I tell if my system supports hardware acceleration?

Check your GPU model and driver version, then verify software settings for acceleration. Look for compute APIs like CUDA or OpenCL in the app preferences.

Check your GPU and drivers, then look for acceleration options in the software settings.
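On systems with an NVIDIA GPU, the vendor's `nvidia-smi` command-line tool can report the model and driver version. The sketch below wraps it in a hypothetical helper, `list_nvidia_gpus`, and assumes `nvidia-smi` is on the PATH. It covers NVIDIA hardware only, so an empty result does not mean the system has no GPU.

```python
import shutil
import subprocess


def list_nvidia_gpus():
    """Return [(name, driver_version), ...], or [] if nvidia-smi is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name,driver_version", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            timeout=10,
            check=True,
        )
    except (subprocess.SubprocessError, OSError):
        # The tool exists but failed or timed out, e.g. no working driver.
        return []

    gpus = []
    for line in result.stdout.splitlines():
        # Each line looks like "<name>, <driver version>"; split on the last comma.
        parts = [part.strip() for part in line.rsplit(",", 1)]
        if len(parts) == 2 and all(parts):
            gpus.append((parts[0], parts[1]))
    return gpus


gpus = list_nvidia_gpus()
if gpus:
    for name, driver in gpus:
        print(f"{name} (driver {driver})")
else:
    print("No NVIDIA GPU reported by nvidia-smi; check other vendor tools or OS settings.")
```

For AMD or Intel GPUs, the operating system's device manager or the vendor's own utilities provide the same information.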

Will hardware acceleration always improve performance?

No. Benefits depend on the workload, software support, and data transfer efficiency. Some tasks remain CPU-bound or memory-bound despite acceleration.

Not always; gains depend on workload and software support.

Can software use GPU acceleration for AI tasks?

Yes. Many AI frameworks leverage GPU acceleration to train and run models faster, provided you have a compatible GPU, drivers, and compute libraries.

Yes, many AI tools use GPU acceleration when your hardware and drivers support it.

What are tensor cores and RT cores?

Tensor cores speed up matrix operations for AI workloads, while RT cores accelerate ray tracing for real-time rendering. Both are specialized components on NVIDIA GPUs that boost specific tasks; other vendors offer comparable units under different names.

Tensor cores are for AI math; RT cores handle real-time ray tracing.

How do I enable hardware acceleration in common apps?

Open the app settings, locate hardware acceleration or performance options, enable it, and restart if required. Keep drivers up to date for compatibility.

Turn on hardware acceleration in the app settings, then restart if needed and update drivers.

Main Points

- Offloading parallel workloads from the CPU to the GPU speeds up suitable tasks.

- Software support and drivers determine the actual gains.

- Data transfer can bottleneck performance, not only compute.

- Enable acceleration in apps and keep drivers updated.

- Know core technologies like tensor and RT cores and relevant APIs.