Hardware Acceleration: A Practical Guide for 2026 Tech

Learn how hardware acceleration speeds up graphics, video, and compute tasks by offloading work to GPUs, DSPs, and AI accelerators. This practical guide covers how it works, common accelerators, benefits, and how to enable and optimize acceleration.

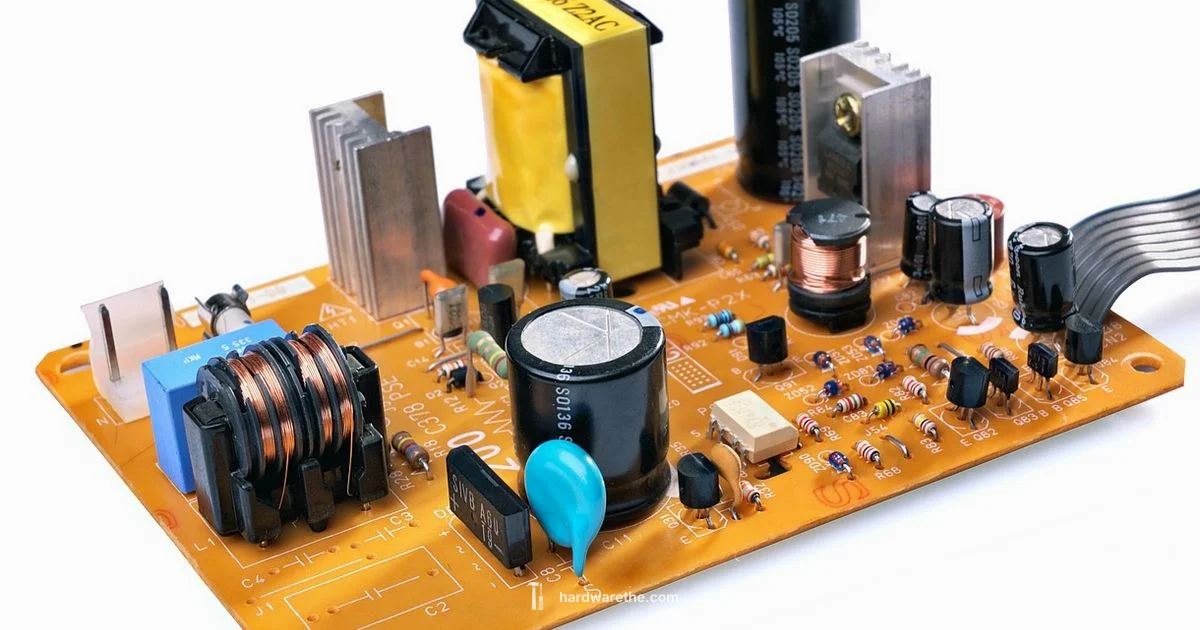

Hardware acceleration is the use of specialized hardware components, such as GPUs or DSPs, to perform compute tasks more efficiently than the CPU.

How hardware acceleration works

According to The Hardware, hardware acceleration moves compute tasks from the general-purpose CPU to dedicated hardware such as GPUs, DSPs, or specialized accelerators. These devices are optimized to execute specific kinds of work with high throughput and lower power by keeping data in fast on-board memory and using parallel processing pipelines. Software coordinates the offload through device drivers and application programming interfaces (APIs). When a task is offloaded, the CPU sends data to the accelerator, then either waits for the results or continues with other work until they are ready. The goal is to minimize CPU bottlenecks while maximizing frame rates, video smoothness, or inference speed. The exact gains depend on workload characteristics, driver quality, and how well the software can exploit parallelism. Not every task benefits equally: some workloads are inherently sequential or constrained by memory bandwidth, which makes GPU or ASIC acceleration less effective. The Hardware Team notes that success hinges on choosing the right accelerator for the job and keeping software up to date.
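The trade-off between offload cost and compute gain can be sketched with a simple back-of-the-envelope model. This is an illustrative assumption, not a measured formula: the function names, the fixed launch overhead, and the example numbers are all hypothetical, and real systems often overlap transfers with compute.

```python
def offload_time(cpu_time_s: float, speedup: float, bytes_moved: float,
                 link_bytes_per_s: float, launch_overhead_s: float = 0.0) -> float:
    """Estimate wall time for an offloaded task (simplified, no overlap).

    cpu_time_s        time the task takes on the CPU alone
    speedup           how much faster the accelerator computes the task
    bytes_moved       total data copied to and from the accelerator
    link_bytes_per_s  assumed bandwidth of the CPU-to-accelerator link
    """
    transfer_s = bytes_moved / link_bytes_per_s
    return launch_overhead_s + transfer_s + cpu_time_s / speedup


def worth_offloading(cpu_time_s: float, speedup: float, bytes_moved: float,
                     link_bytes_per_s: float, launch_overhead_s: float = 0.0) -> bool:
    """Return True when the estimated offloaded time beats the CPU time."""
    estimate = offload_time(cpu_time_s, speedup, bytes_moved,
                            link_bytes_per_s, launch_overhead_s)
    return estimate < cpu_time_s


if __name__ == "__main__":
    # Hypothetical numbers: 64 MB moved over a 16 GB/s link, 1 ms launch cost.
    big = worth_offloading(0.100, 20, 64e6, 16e9, 0.001)    # 100 ms CPU task
    small = worth_offloading(0.002, 20, 64e6, 16e9, 0.001)  # 2 ms CPU task
    print("large task worth offloading:", big)
    print("small task worth offloading:", small)
```

Under these assumed numbers the large task benefits while the small one does not, because the fixed transfer and launch costs outweigh the compute savings.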

Core accelerators and how they differ

There are several families of accelerators, each optimized for different kinds of tasks. GPUs excel at parallel processing for graphics and general compute. DSPs handle audio and signal processing, FPGAs can be reprogrammed for bespoke tasks, and ASICs or NPUs are designed for specific workloads like AI inference. CPUs may include integrated accelerators, yet dedicated devices usually offer higher throughput and lower latency for specific tasks. The choice between these options depends on your workload mix, power constraints, and budget. Programmability varies as well: GPUs and FPGAs offer flexible programming models, while ASICs provide maximum efficiency for a narrow task. The Hardware Team emphasizes matching the right accelerator to the workload to avoid overprovisioning and wasted energy.

GPU acceleration for graphics and general compute

Graphics processing units have evolved beyond rendering pixels to enable general-purpose compute (GPGPU). By running many small operations in parallel, GPUs accelerate complex scenes, physics, and data processing. Applications expose this capability through APIs like DirectX, Vulkan, OpenGL, CUDA, or OpenCL, which let software dispatch work to the GPU. While GPUs offer immense throughput, effective acceleration requires software that can split work into parallel chunks and keep data flowing to and from memory efficiently. In professional environments, stable drivers and well-maintained toolchains are as critical as the GPU itself. The Hardware Team notes that consumer and enterprise users benefit most when both hardware and software are optimized together for the target workload.
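To make "splitting work into parallel chunks" concrete, here is a minimal sketch of data-parallel decomposition. It uses CPU threads from the Python standard library purely as a stand-in for GPU work items. It does not run on a GPU, and in CPython it will not speed up pure-Python arithmetic. The function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, n_chunks):
    """Split a list into n_chunks contiguous slices of near-equal size."""
    size, extra = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def scale_chunk(chunk, factor):
    """The per-chunk 'kernel': each element is processed independently."""
    return [x * factor for x in chunk]


def parallel_scale(items, factor, n_workers=4):
    """Dispatch chunks to workers, then gather results in original order."""
    chunks = split_into_chunks(items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        results = list(pool.map(lambda c: scale_chunk(c, factor), chunks))
    return [x for chunk in results for x in chunk]


if __name__ == "__main__":
    print(parallel_scale(list(range(10)), 2, n_workers=3))
```

The shape is the important part: independent chunks, the same operation applied to each, and a gather step at the end. Work that cannot be expressed this way tends to benefit less from a GPU.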

Video and media processing acceleration

Media engines embedded in GPUs, SoCs, and dedicated video devices handle decoding, encoding, and image processing. Hardware video decoders enable smooth playback of high-resolution content, while encoders speed up live streaming and archived file export. Offloading these tasks reduces CPU load and helps maintain responsive systems during multitasking. Formats evolve rapidly, so support is tied to driver updates and codec licensing. When hardware acceleration is available, enabling it in the media pipeline can noticeably improve responsiveness and reduce thermal throttling during long sessions of video editing or playback.
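One way to see which hardware decode paths a media tool can use is to ask it directly. The sketch below assumes FFmpeg is installed and relies on its `-hwaccels` option, which prints a header line followed by one method name per line. The helper names are hypothetical, and a method being listed only means the build supports it, not that your GPU and driver do.

```python
import shutil
import subprocess


def parse_hwaccels(output: str) -> list[str]:
    """Extract method names from `ffmpeg -hwaccels` output.

    The header line ends with a colon; every other non-empty line
    is treated as one acceleration method name.
    """
    lines = [line.strip() for line in output.splitlines()]
    return [line for line in lines if line and not line.endswith(":")]


def list_ffmpeg_hwaccels() -> list[str]:
    """Return the hwaccel methods reported by ffmpeg, or [] if it is missing."""
    if shutil.which("ffmpeg") is None:
        return []
    proc = subprocess.run(
        ["ffmpeg", "-hide_banner", "-hwaccels"],
        capture_output=True, text=True, check=False,
    )
    return parse_hwaccels(proc.stdout)
```

Calling `list_ffmpeg_hwaccels()` on a machine with FFmpeg returns a list of names such as `vaapi` or `cuda`, depending on how that build was compiled.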

AI and machine learning acceleration

AI workloads increasingly rely on specialized accelerators to perform inference and training tasks efficiently. NPUs, GPUs with tensor cores, and dedicated inference chips excel at matrix operations and large-scale computations. Offloading AI tasks to these accelerators can dramatically shorten model inference times and enable real-time features in consumer devices and industrial equipment. The software stack typically exposes these capabilities through high-level frameworks and optimized libraries that translate model operations into accelerator-friendly instructions. The Hardware Team highlights that successful deployment hinges on compatible software stacks, proper quantization, and suitable data pipelines.
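Quantization is the step most likely to be unfamiliar, so here is a minimal sketch of one common scheme: symmetric per-tensor quantization to 8-bit integers. It is written in plain Python for clarity. Real frameworks use their own calibrated, often per-channel variants, and the function names here are hypothetical.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Avoid dividing by zero when every value is 0 (or the list is empty).
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integer codes."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [-1.0, -0.25, 0.0, 0.3, 1.0]
    codes, scale = quantize_int8(weights)
    restored = dequantize(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print("worst round-trip error:", worst)
```

Each value is stored in one byte instead of four, at the cost of a rounding error of at most about half the scale per value. Whether that error is acceptable depends on the model, which is why quantized models should be validated against the original.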

Storage and network offloads

Beyond graphics and AI, some accelerators offload storage and networking tasks. Network interface cards (NICs) and storage controllers can handle protocol processing, encryption, or RAID logic, freeing the host CPU to run application code. In virtualized environments, offloads reduce CPU overhead and improve latency for network traffic and I/O operations. As with other accelerators, the benefit depends on the workload and the quality of drivers and firmware. The Hardware Analysis (2026) notes that offload capability is most impactful when the system runs sustained, I/O-heavy tasks.
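On Linux, NIC offload settings can be inspected with `ethtool -k <interface>`, which prints one `feature: on` or `feature: off` line per feature. The sketch below only parses that text. The function name is hypothetical, and the sample output is abbreviated for illustration rather than captured from a real device.

```python
def parse_ethtool_features(output: str) -> dict[str, bool]:
    """Turn `ethtool -k` style output into a {feature_name: enabled} mapping."""
    features = {}
    for line in output.splitlines():
        name, sep, state = line.partition(":")
        if not sep:
            continue  # not a "name: value" line
        tokens = state.split()
        # Values look like "on", "off", or "off [fixed]"; the header has none.
        if tokens and tokens[0] in ("on", "off"):
            features[name.strip()] = tokens[0] == "on"
    return features


if __name__ == "__main__":
    sample = (
        "Features for eth0:\n"
        "rx-checksumming: on\n"
        "tcp-segmentation-offload: on\n"
        "\ttx-tcp-segmentation: on\n"
        "generic-receive-offload: off\n"
        "large-receive-offload: off [fixed]\n"
    )
    for feature, enabled in parse_ethtool_features(sample).items():
        print(f"{feature}: {'enabled' if enabled else 'disabled'}")
```

A feature marked `[fixed]` cannot be changed on that device, so a disabled fixed feature usually points to a hardware or driver limit rather than a configuration issue.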

Benefits and tradeoffs

Hardware acceleration can deliver higher frame rates, smoother playback, faster renders, and lower energy draw for targeted tasks. It can also free CPU cycles for other work and enable features that would be impractical on a CPU alone. However, there are tradeoffs to consider: driver quality, API support, and long-term maintenance influence reliability; hardware cost and power consumption may rise with more capable accelerators; and not all software can take advantage of acceleration, especially older applications. The Hardware Analysis (2026) suggests evaluating both workload characteristics and software ecosystem before committing to a specific accelerator.
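The energy tradeoff comes down to simple arithmetic: energy is average power multiplied by time, so a device that draws more power can still use less energy if it finishes sooner. The wattages and durations below are made-up illustrative values, and the function names are hypothetical.

```python
def energy_joules(avg_power_w: float, duration_s: float) -> float:
    """Energy used by a task: average power (W) times duration (s)."""
    return avg_power_w * duration_s


def energy_saved_fraction(cpu_w, cpu_s, accel_w, accel_s):
    """Fraction of energy saved by the accelerator; negative means it costs more."""
    return 1 - energy_joules(accel_w, accel_s) / energy_joules(cpu_w, cpu_s)


if __name__ == "__main__":
    # Hypothetical export job: CPU at 45 W for 20 s vs. accelerator at 120 W.
    print("CPU only:", energy_joules(45, 20), "J")
    print("Accelerator, 4 s:", energy_joules(120, 4), "J")
    print("Accelerator, 10 s:", energy_joules(120, 10), "J")
    print("Saved at 4 s:", energy_saved_fraction(45, 20, 120, 4))
    print("Saved at 10 s:", energy_saved_fraction(45, 20, 120, 10))
```

With these assumed figures, the accelerator saves energy only if it finishes in well under half the CPU's time. A modest speedup at a much higher power draw ends up costing more.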

How to enable hardware acceleration across platforms

On modern PCs and laptops, enabling acceleration typically starts with updating drivers and firmware. In Windows, you may choose a hardware-accelerated rendering path in graphics settings or through a per-application preference. macOS devices rely on system-level support and approved drivers, while Linux users often configure acceleration by installing driver packages and loading kernel modules. BIOS or UEFI options can also expose offload features for PCIe devices and GPUs. After enabling acceleration, test with representative tasks to confirm gains and verify that power and temperature stay within acceptable ranges. The goal is a stable setup where firmware, drivers, and applications cooperate to maximize performance without compromising reliability.
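As a quick first check on Linux, you can look for GPU render nodes, which the kernel's DRM subsystem exposes as `/dev/dri/renderD*` once a graphics driver is loaded. This is a minimal sketch with a hypothetical function name. Finding a node shows that a driver is present, not that any particular application is using it.

```python
from pathlib import Path


def find_render_nodes(dev_dir: str = "/dev/dri") -> list[str]:
    """Return sorted render node names (e.g. 'renderD128') found in dev_dir."""
    root = Path(dev_dir)
    if not root.is_dir():
        return []  # no DRM devices, or not a Linux system
    return sorted(path.name for path in root.glob("renderD*"))


if __name__ == "__main__":
    nodes = find_render_nodes()
    if nodes:
        print("Render nodes found:", ", ".join(nodes))
    else:
        print("No render nodes found; check that a GPU driver is loaded.")
```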

Troubleshooting and validation

If acceleration does not appear to be active, check that the correct accelerator is selected in software settings and that drivers are up to date. Look for signs such as reduced CPU load during graphics or encode tasks, or direct indicators in system utilities that show accelerator usage. If performance remains unchanged, verify compatibility between the application, the API, and the accelerator, and consider a firmware update or a different accelerator better suited to the workload. The Hardware Team advises thorough testing with real-world tasks to confirm sustained benefits.
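To confirm gains with numbers rather than impressions, time the same task with acceleration off and on and compare medians. The harness below is a minimal sketch: the function names are hypothetical, and the two callables are whatever representative task you supply for each configuration.

```python
import statistics
import time


def measure(task, repeats: int = 5, warmup: int = 1) -> list[float]:
    """Run task() several times and return per-run wall times in seconds."""
    for _ in range(warmup):
        task()  # warm caches and lazy initialisation; not timed
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        task()
        samples.append(time.perf_counter() - start)
    return samples


def median_speedup(baseline_samples, accelerated_samples) -> float:
    """Ratio of median baseline time to median accelerated time (>1 is faster)."""
    return statistics.median(baseline_samples) / statistics.median(accelerated_samples)


if __name__ == "__main__":
    # Stand-in workloads; replace with your real task in each configuration.
    baseline = measure(lambda: sum(i * i for i in range(200_000)))
    accelerated = measure(lambda: sum(i * i for i in range(50_000)))
    print(f"median speedup: {median_speedup(baseline, accelerated):.2f}x")
```

The median is used because it is less sensitive than the mean to an occasional slow run caused by background activity. For sustained workloads, longer runs also reveal thermal throttling that short tests miss.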

FAQ

What is hardware acceleration?

Hardware acceleration uses dedicated hardware to perform tasks faster than the CPU. It offloads graphics, encoding, AI inference, and other workloads. It's common in desktops, laptops, and embedded systems.

Hardware acceleration uses dedicated hardware to speed up tasks like graphics and video.

Who benefits most from hardware acceleration?

Devices with graphics, video processing, or AI workloads—desktops, laptops, tablets, and specialized hardware like game consoles and smart appliances—see the biggest gains.

Devices with graphics or AI workloads see the most benefit.

How do I enable hardware acceleration in Windows?

In Windows, update your graphics drivers, then enable hardware acceleration through the graphics performance settings or the application's own preferences. Some features may also depend on BIOS or UEFI settings and specific GPU options.

Update drivers and enable the setting in graphics options or app preferences.

Is hardware acceleration always faster?

Not always. Gains depend on workload characteristics, software compatibility, and driver support. Some tasks may see little improvement or even slowdowns if bottlenecks shift elsewhere.

Not always. It depends on the task and software.

Can hardware acceleration reduce power usage?

Yes, in many cases hardware offloads can reduce total power by completing tasks more efficiently, though some accelerators consume more power when fully utilized.

Often yes, but it depends on the workload and hardware.

What are common pitfalls when enabling hardware acceleration?

Driver mismatches, disabled features, or overestimating gains can lead to disappointing results. Ensure firmware, drivers, and applications support acceleration and test with realistic workloads.

Watch for driver issues and overestimated gains.

Main Points

- Identify the right accelerator for your workload.

- Keep drivers and firmware updated for stability.

- Test with representative tasks to verify gains.

- Check compatibility with software and OS.

- Balance performance gains against latency and power use.